Leaderboard Mechanics Quietly Decide Your AI Model Trust and the Math Is Brittle

From drug discovery to code generation, benchmark leaderboards have become the scorecard for AI progress, but a rash of contamination scandals, domain mismatches, and license shell games is forcing the community to confront what these rankings actually measure.

aitidbits.ai

aitidbits.ai

Last month, Insilico Medicine quietly expanded MMAI Gym, its platform for benchmarking generative AI models against real drug discovery tasks, adding what it calls benchmark leaderboard portals for scientific research. The press release landed on a Tuesday and made almost no noise in the mainstream AI press. You can guess why: no chatbot topping a reasoning benchmark, no screenshot of a model writing a poem about gradient descent. Just a biotech company building the kind of domain-specific evaluation infrastructure that the field has been saying it needs for years, and very few people outside computational chemistry noticed.

It matters because Insilico is doing something the dominant general-purpose leaderboards do not: tying benchmark performance directly to a workflow that costs real money when it fails. In drug discovery, a false positive on a target-binding prediction does not mean a lower score on the next leaderboard refresh. It means months of lab work and seven figures down the drain. This is the gulf between most AI leaderboards and the tasks they claim to measure, and it is wider than the scorecards suggest. When Insilico announced the MMAI Gym expansion, it described leaderboards covering target identification, molecule generation, and clinical trial forecasting. Each leaderboard ties to a specific, falsifiable outcome. That is rare.

Most of the leaderboards driving model selection decisions in 2026 were not built with that kind of accountability. They were built to produce a ranking. The ranking generates attention. The attention generates downloads, citations, and enterprise contracts. The mechanics that produce that ranking, however, are under more scrutiny than the scores they spit out.

The contamination problem is not going away

In March, blockchain security firm OpenZeppelin published an audit of OpenAI's EVMbench, a benchmark designed to evaluate how well AI models find vulnerabilities in Ethereum smart contracts. What OpenZeppelin found was not a bad model. It was a bad dataset. The EVMbench test data, according to the audit published by CoinTelegraph, contained training data leaks and at least four invalid high-severity vulnerability classifications. Models were not reasoning about smart contract security; they were, at least partially, memorizing vulnerability reports they had already seen during pretraining. The benchmark was measuring recall, not capability.

This is the contamination problem in one crisp case study. It is not a new problem. Researchers at Abacus.AI, NYU, and several other institutions have been documenting benchmark contamination since at least 2023, and the 2024 release of LiveBench was a direct response: a benchmark that refreshes its test data monthly to stay ahead of models that have ingested everything on the public internet. Yet the problem persists because the incentives run the other way. A lab that releases a model with a suspiciously high score on MMLU-Pro or SWE-Bench gets coverage. A lab that runs an exhaustive decontamination audit and publishes a lower, more honest score does not.

The Chinese open-weight wave has made this tension unavoidable. In late April, Z.ai's GLM-5.1 and Moonshot AI's Kimi K2.6 both posted scores on SWE-Bench Pro that surpassed OpenAI's GPT-5.4 and Anthropic's Claude Opus 4.6. The headlines wrote themselves: open-weight models had toppled the big three. But as several fine-tuners I spoke with pointed out, SWE-Bench Pro measures a specific kind of repository-level patch generation that correlates loosely, at best, with the kind of debugging most working developers actually do. The leaderboard rewards models that are good at the test, and the test looks like a GitHub issue with a known resolution path. That is a real skill. It is also a narrow one.

You see this pattern everywhere once you start looking. A model labeled for scientific reasoning tops a leaderboard built from textbook problems with clean, single-step answers. A model labeled for code generation wins on a benchmark where most test cases appear, in near-identical form, in public repositories predating the model's knowledge cutoff. The leaderboard tells you who won. It does not tell you whether the game was fair.

What the leaderboard does not measure

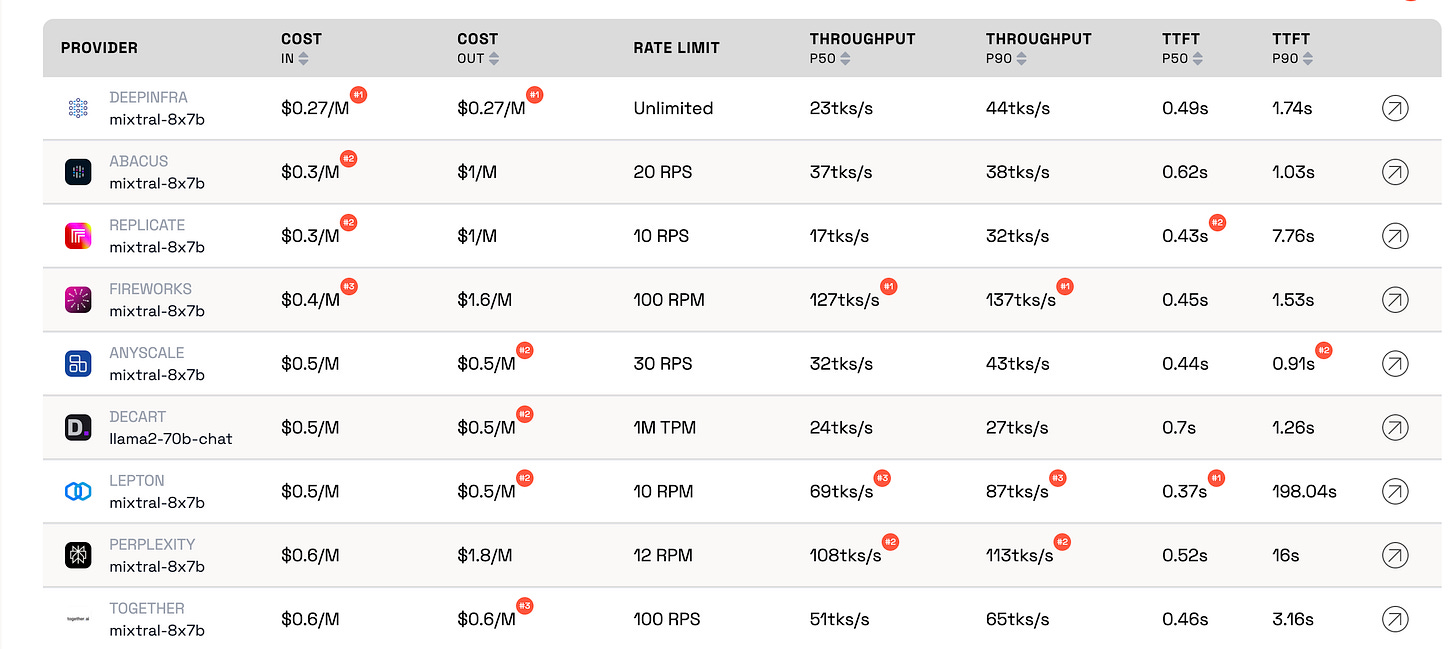

The new generation of meta-leaderboards launched in spring 2026, including Klu.ai, BenchLM.ai, and PromptXL, attempt to address this by comparing models across multiple dimensions: quality, speed, cost, and context-handling. That is an improvement over a single numeric score on a single static dataset. But it introduces a new problem. Once speed and cost enter the ranking, the leaderboard is no longer measuring capability in the abstract. It is measuring a specific deployment profile. A model that is cheap and fast but wrong is not a bargain, and a model that is expensive and slow but right is not a failure. The leaderboard cannot know your tolerance for either tradeoff.

This is where domain-specific benchmarks, like Insilico's MMAI Gym, become instructive. When the evaluation is tied to a downstream task with a measurable economic outcome, the leaderboard stops being a popularity contest and starts being an engineering tool. Insilico is not asking whether a model is good at biology in general. It is asking whether the model can identify a binding target that will survive wet-lab validation. If the model cannot, the score drops. There is no partial credit for a plausible-sounding explanation. That design principle, outcome-anchored evaluation, is what most general-purpose leaderboards lack, and it is what keeps them brittle.

The license question compounds the measurement problem. Many of the open-weight models now climbing the leaderboards ship with restricted-use clauses that would make a corporate legal team flinch. You can download the weights. You can fine-tune them. But read the fine print and you will often find language that prohibits commercial use above a certain revenue threshold, or requires attribution that is incompatible with your deployment pipeline, or reserves the right to revoke access entirely. These are not open models in the OSI sense. They are weights under contract.

The leaderboard does not surface this distinction. A model with a permissive Apache 2.0 license and a model with a bespoke restricted-use agreement sit side by side on the same ranking table, separated only by their scores. The maintainer running a production pipeline cannot tell, from the leaderboard alone, whether the model they are about to integrate will survive their next compliance review. That is a failure of presentation, not of measurement, but it is a failure all the same, and it sends download numbers to models that should not be downloaded for half the use cases they end up in.

A benchmark that is not tied to a specific, falsifiable outcome is not an evaluation. It is a vibe check with better marketing.Konstantin Olufemi, TechReaderDaily

The academic groups running independent evals have been saying versions of this for years. What has changed in 2026 is that the commercial stakes are high enough that sloppy leaderboards now carry concrete costs. A biotech firm that picks a protein-folding model based on a general-purpose leaderboard ranking, rather than a domain-specific benchmark with wet-lab validation, is making a bet with the company's pipeline. A fintech startup that integrates a coding model because it topped SWE-Bench Pro, only to discover it cannot handle the proprietary internal libraries their stack depends on, learns the same lesson at a smaller but still painful scale.

The OpenZeppelin audit of EVMbench revealed something else worth examining. The four invalid high-severity vulnerability classifications the auditors found were not edge cases. They were outputs the model had generated with high confidence, flagged because the benchmark dataset had been constructed without sufficient domain review. The people who built EVMbench were not smart-contract security experts. They were AI researchers trying to build a challenging test. The result was a test that looked hard, produced clean rankings, and rewarded models that had memorized the wrong things.

This is not a criticism of the EVMbench team specifically. It is a structural problem. Building a good benchmark requires deep domain expertise in the thing being measured, not just in machine learning evaluation methodology. The best-cited benchmarks in NLP were built by linguists and cognitive scientists, not by teams optimizing for paper acceptance. As the AI field expands into high-stakes domains like drug discovery, legal reasoning, and cybersecurity, the gap between who builds the benchmark and who understands the domain is becoming a liability.

Insilico Medicine's approach, building leaderboards with pharmaceutical industry partners who bring the domain expertise and carry the cost of false positives, is one model for closing that gap. It is slow, expensive, and does not produce the kind of viral scoreboard moments that drive TechCrunch headlines. But it produces rankings that a research director can actually use to allocate budget. You might notice that the most useful benchmarks in any field, the ones that stick around for decades, share this property: they were built by the people who need the answers, not by the people who need the citations.

The Chinese open-weight surge adds another wrinkle. GLM-5.1 and Kimi K2.6 are genuinely impressive models, and the teams at Z.ai and Moonshot AI have done serious engineering work that deserves recognition. But the narrative that open-weight models have caught up to closed models on coding benchmarks needs to be read alongside the fact that SWE-Bench Pro, like most public benchmarks, is a fixed target. When you train on the entire public internet and fine-tune aggressively on GitHub repositories, you are going to do well on a benchmark constructed from GitHub issues. The question is not whether the score is real. It is whether the score transfers.

Transfer is where most leaderboards go quiet. A model tops a coding benchmark on Tuesday. On Wednesday, a startup integrates it into their CI pipeline and discovers it cannot handle their monorepo structure, or their internal naming conventions, or the subtle dependency graph that every junior engineer learns in their first month. The leaderboard score was accurate. It was also irrelevant to the actual workflow. This is not the model's fault. It is the leaderboard's fault, or rather the fault of an ecosystem that conflates benchmark performance with capability in a way no serious engineering discipline would tolerate.

What is a buyer supposed to do? The honest answer, and the one you will hear from maintainers who have been doing this long enough to have scars, is that a leaderboard is a starting point for your own evaluation, not the end of one. You take the top three models on a benchmark relevant to your domain. You run them on your own data, with your own metrics, under your own latency and cost constraints. If the ranking holds, great. If it does not, you have learned something the leaderboard could not tell you. This is obvious advice, and almost nobody follows it because it is expensive and slow.

The Insilico MMAI Gym story matters because it demonstrates what happens when the evaluation cost is built into the platform rather than offloaded to the user. The leaderboard portals they have built do not just show a score; they show the score against a specific assay, a specific target family, a specific clinical endpoint. You can drill down into the exact condition under which a model succeeded or failed. That level of resolution is what turns a ranking from a marketing artifact into a decision tool.

Until more fields adopt that approach, the leaderboard economy will continue to reward the wrong behaviors. Labs will optimize for the test, not the task. Benchmarks will leak into training sets. Scores will rise while real-world reliability stays flat. And the press will keep writing headlines about which model is number one, because that is the story the leaderboard is built to tell. Watch for the benchmarks that tie their rankings to something falsifiable and expensive. Those are the ones that will still matter in three years. The rest are just scoreboard animation.