AI · Open Weights

Google’s decision to release Gemma 4 under the permissive Apache 2.0 license reshuffles the open-weights landscape, putting immediate pressure on Meta’s Llama and any other lab still shipping models with restrictive usage terms.

May 12, 2026 · 9 min

AI · Evaluation

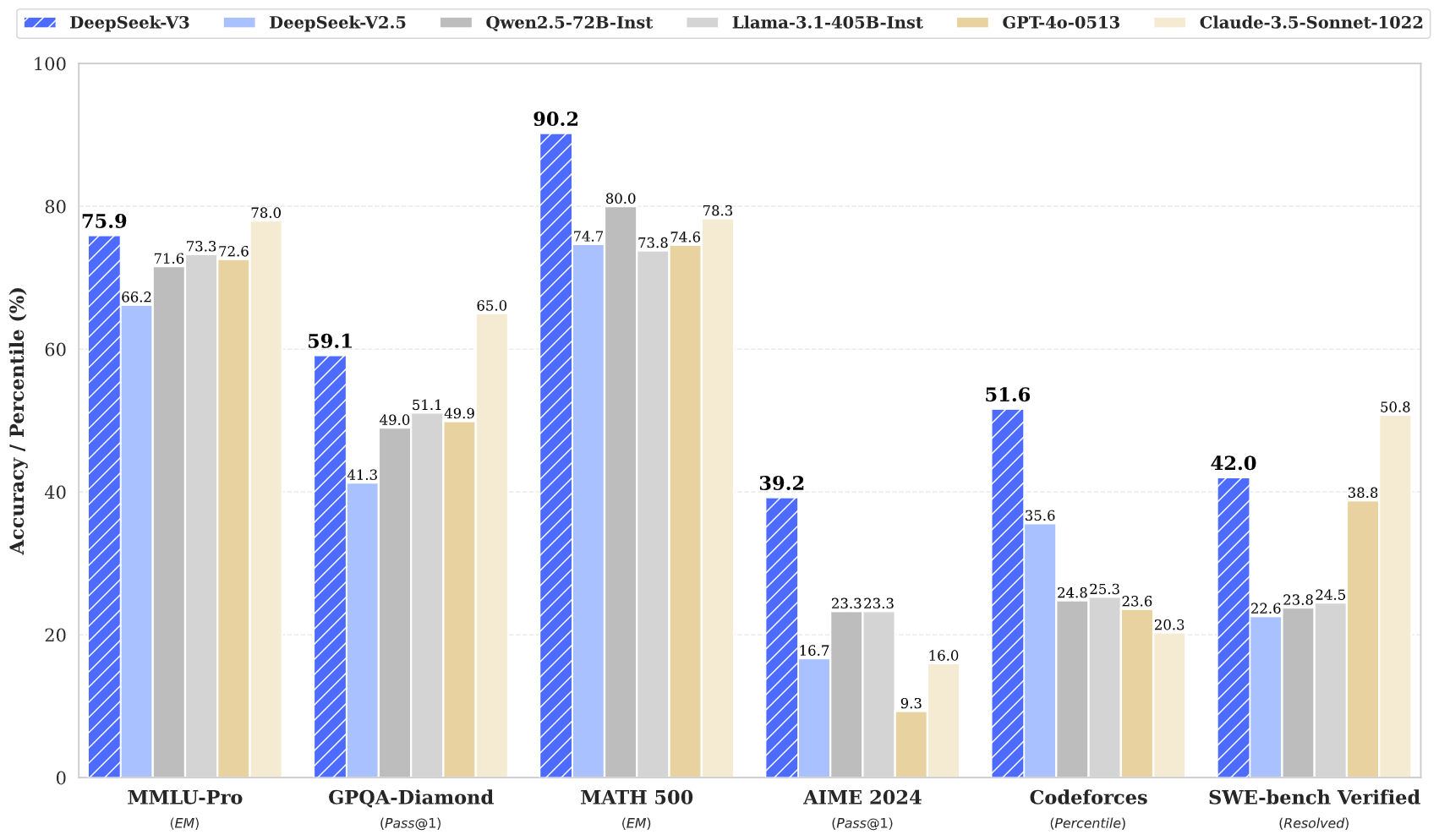

From SWE-Bench Pro to the Stanford AI Index, AI benchmark leaderboards now drive billions in investment and geopolitical posturing, yet the mechanics behind the numbers are more fragile than the scores suggest.

May 11, 2026 · 9 min

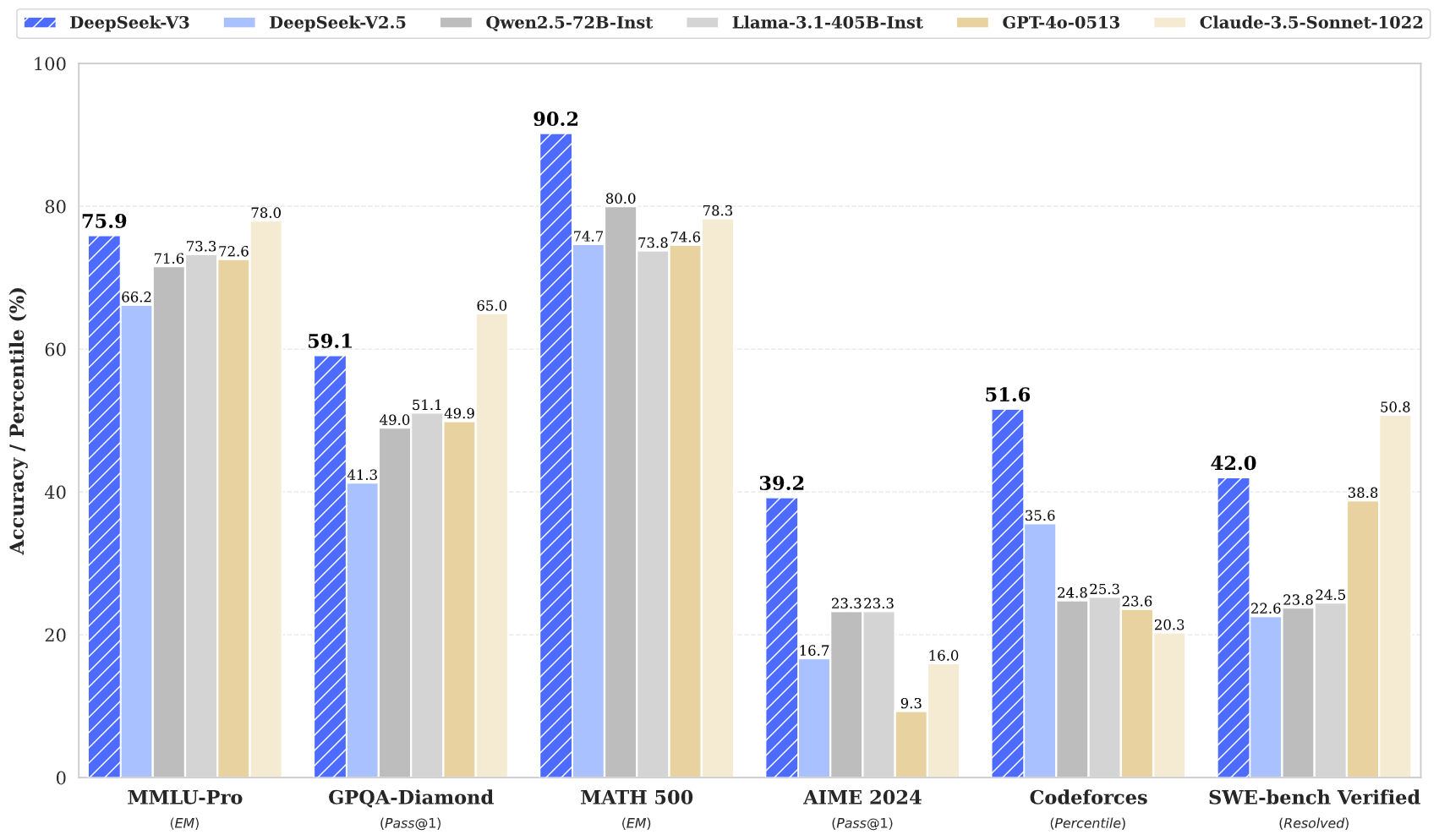

AI Desk · Benchmarks & Evaluation

From drug discovery to code generation, benchmark leaderboards have become the scorecard for AI progress, but a rash of contamination scandals, domain mismatches, and license shell games is forcing the community to confront what these rankings actually measure.

May 9, 2026 · 11 min

Open Weights · Evaluation

As the gap between leaderboard-topping scores and deployable models widens, researchers are questioning whether these benchmarks actually measure what matters for real-world AI performance.

May 9, 2026 · 3 min

AI · Open Weights

No press release, no blog post, no Twitter thread. Just a Hugging Face commit and a one-paragraph model card. The license terms are the part you should actually read.

May 8, 2026 · 1 min

AI · Open Weights

Three of the four open-weights releases of the past 30 days share the same five clauses. The Brussels Effect is now a license template, and developers should read it before downloading.

May 7, 2026 · 1 min

AI · Open Weights
