Kubernetes Won, and Now Hyperscalers Battle Over the Next Cloud Abstractions

As the managed-Kubernetes battle plateaus on the hype cycle, hyperscalers are now racing to build the abstractions that will make Kubernetes invisible, with every earnings call revealing who leads.

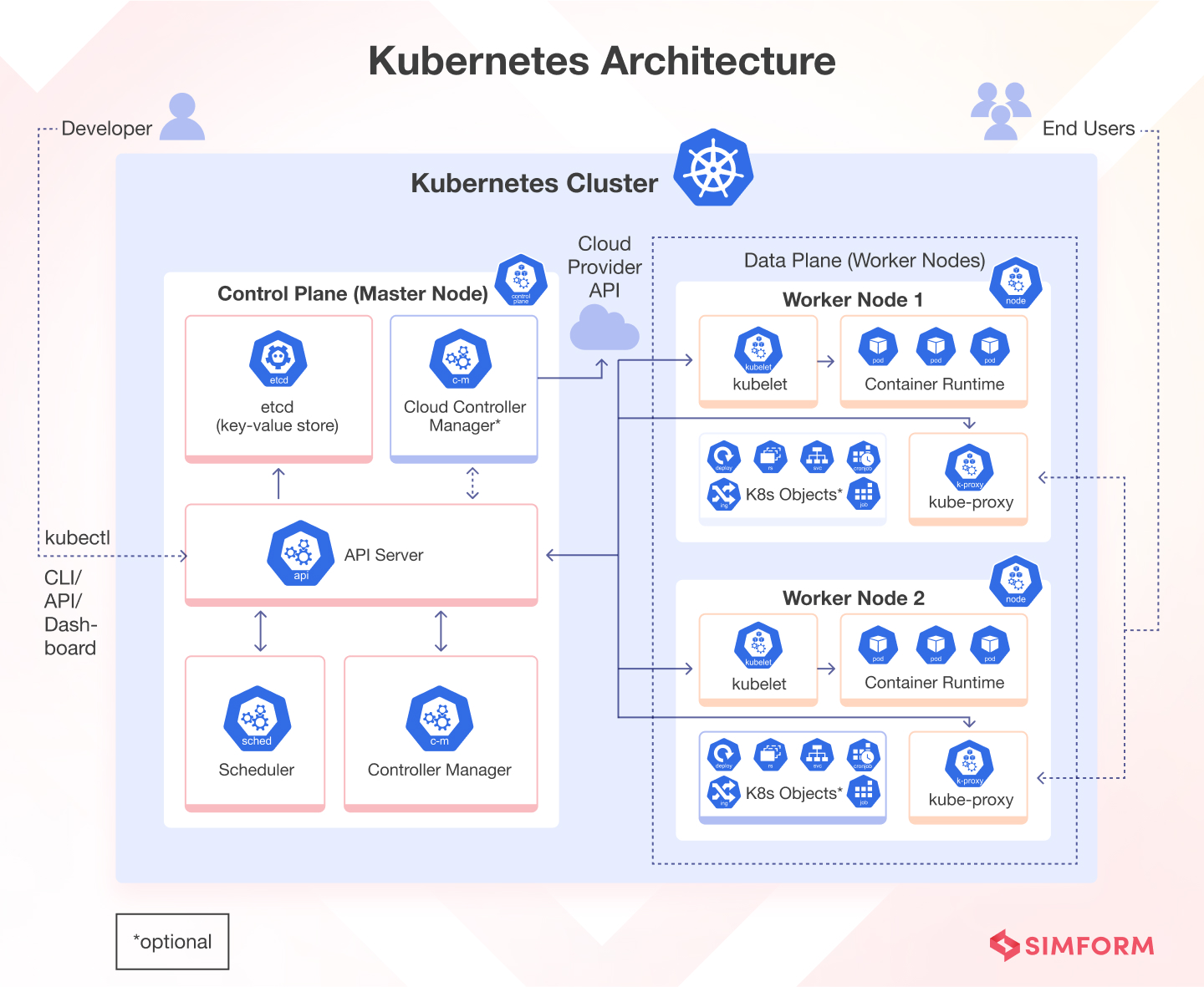

simform.com

When Google CEO Sundar Pichai told investors on the company's Q1 2026 earnings call that 'our enterprise AI solutions have become our primary growth driver for cloud,' the line read as a victory lap — but it also marked a strategic pivot whose dimensions are only now becoming legible to the engineers and platform teams who actually run the workloads. For the better part of a decade, the hyperscalers competed to own the managed Kubernetes layer. That competition is not over, but it has been subsumed into something larger. The game is no longer who can manage a cluster better. It is who can make the cluster disappear altogether.

The raw numbers from Q1 2026 underscore the stakes. According to CRN's Q1 earnings face-off, AWS exited the quarter at a $150 billion annualised run rate, Microsoft's cloud business reached $139 billion, and Google Cloud crossed $80 billion. Across all three, AI workloads — most of which are containerised and a large fraction of which still touch a Kubernetes control plane somewhere in the stack — are the fastest-growing revenue component. CRN's data, compiled from earnings transcripts and filings in the first week of May 2026, shows combined cloud revenue of $92 billion for the quarter across the Big Three. The managed-Kubernetes services are not themselves the revenue driver at that scale, but they are the retention glue.
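As a quick sanity check, the three headline figures can be reconciled with the $92 billion quarterly total. The sketch below assumes all three numbers are annualised run rates (the text says so explicitly only for AWS) and that one quarter is roughly a quarter of the annualised sum. The variable and function names are illustrative.

```python
# Figures in billions of USD, as quoted in the paragraph above.
# Assumption: all three are annualised run rates; the article states
# this explicitly only for AWS.
run_rates_bn = {"AWS": 150, "Microsoft": 139, "Google Cloud": 80}


def implied_quarterly_total(run_rates):
    """Approximate combined quarterly revenue as one quarter of the annualised sum."""
    return sum(run_rates.values()) / 4


quarterly_bn = implied_quarterly_total(run_rates_bn)
print(f"Implied combined quarterly revenue: ${quarterly_bn:.2f}B")
```

Under that assumption the implied figure is $92.25 billion, which is in line with the combined quarterly revenue CRN reports.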

For much of the past seven years, the managed Kubernetes services — Amazon Elastic Kubernetes Service, Azure Kubernetes Service, and Google Kubernetes Engine — served as the forward sales position for each hyperscaler's cloud platform. A team that adopted EKS was unlikely to leave AWS. AKS locked enterprises into the Microsoft licensing ecosystem. GKE, the original managed Kubernetes service, gave Google a technical halo that offset its smaller enterprise sales force. Each service mattered enormously as a retention mechanism. But the strategic value of simply offering a managed control plane has eroded. All three services now provide roughly comparable functionality: automated upgrades, integrated IAM, cluster autoscaling, managed node groups, and some form of cost visibility. Differentiation has moved elsewhere.

Google, which open-sourced Kubernetes in the first place, spent years trading on the perception that GKE was the reference implementation. From its launch in 2021 through early 2024, GKE Autopilot, which abstracts the node layer entirely, was the most genuinely serverless managed Kubernetes offering, and Google's field teams used it effectively in competitive displacements. But by late 2025, that advantage had narrowed. AWS added its own Autopilot-like features to EKS, and Azure AKS integrated deeply with Microsoft's growing portfolio of AI platform services.

AWS, for its part, understands something about EKS that is easy to miss from the outside: managed Kubernetes is a bridge, not a destination. EKS generates enormous sticky revenue because it is the default choice for organisations that have already committed to the AWS IAM, VPC, and billing machinery. But AWS has been steadily building off-ramps from Kubernetes complexity for years. AWS Fargate, which runs containers without exposing servers to the user and, when paired with ECS, without any Kubernetes cluster at all, celebrated its eighth birthday in 2025 with quiet but meaningful adoption inside large enterprises. Meanwhile, App Runner and Lambda's container support continue to siphon workloads away from EKS for event-driven and HTTP-based services. The posture is subtle: AWS sells Kubernetes because customers demand it, but every investment signal suggests the long-term architecture vision does not centre Kubernetes at all.

Microsoft Azure occupies a distinctive position in this landscape. AKS is rarely the star of Microsoft's cloud pitches (Copilot, Azure AI Foundry, and the OpenAI partnership claim that role), but it is the substrate on which a large portion of Azure's enterprise workloads actually run. Microsoft's hybrid strategy, anchored by Azure Arc, extends AKS management to on-premises and edge environments in ways that neither AWS nor Google has matched with equivalent depth. This matters for the sizable cohort of enterprises that will operate hybrid infrastructure for the next decade. It also creates a licensing-adjacent lock-in: when AKS manages Kubernetes clusters running on-premises through Arc, the commercial gravity toward Azure's broader ecosystem intensifies.

Yet across all three hyperscalers, a reassessment is underway inside enterprise engineering organisations — and it is being documented with unusual candour. In April 2026, InfoWorld contributing editor David Linthicum published a piece titled 'Enterprises are rethinking Kubernetes' that captured a mood shift platform teams have been discussing privately for at least two years. Linthicum reported that 'enterprises once viewed Kubernetes as the universal answer to modern application deployment,' but 'operational realities and the rise of better abstractions are driving a reassessment.' The article, which drew on conversations with enterprise architects across multiple sectors, identified three pressure points: persistent operational complexity, low resource utilisation, and the growing mismatch between Kubernetes' compute model and AI workloads.

The utilisation numbers tell a blunt story. Across a sample of large enterprises tracked by a major FinOps platform, average CPU utilisation in production Kubernetes clusters hovered around 23 percent in early 2026, down from roughly 29 percent in 2023. The decline correlates with the proliferation of AI inferencing workloads that reserve GPU slices — sometimes an entire A100 or H100 — for bursty, unpredictable traffic patterns. Kubernetes' bin-packing and scheduling logic, designed for stateless microservices that scale horizontally, is a poor fit for GPU-bound workloads that require fast, exclusive access to specialised hardware. The result is idle silicon and climbing cloud bills. GPU waste alone has become a line item that CFOs now flag at monthly FinOps reviews.
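To make the utilisation figures concrete, the sketch below converts an average utilisation rate into the share of a bill that pays for idle capacity. It is a deliberate simplification: it assumes cost scales linearly with provisioned capacity and ignores GPUs, storage, and networking. The $100,000 monthly bill is a hypothetical figure, not one from the FinOps data.

```python
def idle_share(utilisation: float) -> float:
    """Fraction of provisioned capacity that sits unused."""
    if not 0.0 <= utilisation <= 1.0:
        raise ValueError("utilisation must be between 0 and 1")
    return 1.0 - utilisation


def idle_spend(monthly_bill_usd: float, utilisation: float) -> float:
    """Spend attributable to idle capacity, assuming cost is proportional to capacity."""
    return monthly_bill_usd * idle_share(utilisation)


# Hypothetical bill; utilisation rates are the 2023 and early-2026 averages quoted above.
HYPOTHETICAL_BILL_USD = 100_000
for year, utilisation in (("2023", 0.29), ("early 2026", 0.23)):
    wasted = idle_spend(HYPOTHETICAL_BILL_USD, utilisation)
    print(f"{year}: about ${wasted:,.0f} of ${HYPOTHETICAL_BILL_USD:,} buys idle CPU")
```

On those assumptions, the drop from 29 to 23 percent utilisation moves the idle portion of each $100,000 from roughly $71,000 to roughly $77,000.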

This cost pressure is driving a second, quieter shift: the emergence of FinOps as an architectural constraint rather than a post-deployment audit function. A May 2026 article in TechCircle described a pattern that 'plays out in nearly every fast-growing engineering organization: cloud spend doubles, then doubles again,' and traced how automated cost optimisation tooling is now being embedded directly into CI/CD pipelines at companies including a major European bank and two U.S.-based SaaS unicorns. When cost gates are applied at deploy time, the economics of running a full Kubernetes cluster for a modest collection of services begin to look indefensible. Teams start asking whether they need Kubernetes at all — or whether a simpler abstraction would be both cheaper and faster.
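A deploy-time cost gate of the kind described can be sketched in a few lines. This is a minimal illustration, not the tooling used by any of the companies mentioned: the `CostEstimate` type, the `cost_gate` function, the service name, and the thresholds are all hypothetical, and a real pipeline would obtain its projected cost from a billing or estimation tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostEstimate:
    """Hypothetical input: monthly cost before and after the proposed deploy."""
    service: str
    current_monthly_usd: float
    projected_monthly_usd: float


def cost_gate(estimate: CostEstimate, budget_usd: float, max_increase_pct: float = 20.0):
    """Return (allowed, reasons); the deploy is allowed only if no rule is violated."""
    reasons = []
    if estimate.projected_monthly_usd > budget_usd:
        reasons.append(
            f"{estimate.service}: projected ${estimate.projected_monthly_usd:,.0f} "
            f"exceeds budget ${budget_usd:,.0f}"
        )
    if estimate.current_monthly_usd > 0:
        delta = estimate.projected_monthly_usd - estimate.current_monthly_usd
        increase_pct = delta / estimate.current_monthly_usd * 100
        if increase_pct > max_increase_pct:
            reasons.append(
                f"{estimate.service}: cost rises {increase_pct:.0f}%, "
                f"above the {max_increase_pct:.0f}% limit"
            )
    return (not reasons, reasons)


# Illustrative values only.
example = CostEstimate("checkout-api", current_monthly_usd=4000.0, projected_monthly_usd=5200.0)
allowed, reasons = cost_gate(example, budget_usd=6000.0)
print("deploy allowed" if allowed else "deploy blocked")
for reason in reasons:
    print(" -", reason)
```

In a CI/CD job, the step would typically exit with a non-zero status when `allowed` is false, so that the pipeline stops before the deploy stage.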

'Three years ago, every new workload that arrived at our platform engineering intake meeting started with the assumption of Kubernetes. Now, roughly 40 percent of new workload requests are explicitly asking for a container runtime without Kubernetes: they want Fargate or Cloud Run or just a VM with a container agent. That shift happened without anyone making a top-down policy change,' a platform engineering director at a Fortune 500 financial services firm said in a briefing with TechReaderDaily.

The post-Kubernetes landscape is not a single technology but a spectrum of simpler abstractions that share one property: the container remains, but the orchestrator vanishes from the developer's line of sight. Google Cloud Run, which celebrated its seventh year in production in 2025, now runs a meaningful share of GCP's containerised workloads and is increasingly the default for new services inside Google's own customer base. AWS Fargate, as noted, continues to grow. Microsoft's Container Apps, built on Kubernetes but exposing almost none of its internals, has been the quiet success story of Azure's platform engineering push. And behind the hyperscalers, a second generation of frameworks (Dapr for distributed application runtimes, Crossplane for infrastructure composition, and KubeVela for application-level abstractions) is layering on top of Kubernetes in ways that obscure it without replacing it.

This layering pattern is significant. It suggests that Kubernetes' most durable role may not be as an application platform at all, but as a kernel — a low-level control plane that higher-level platforms target, much as an operating system kernel is essential infrastructure that few application developers interact with directly. The Cloud Native Computing Foundation, Kubernetes' own governance body, has been quietly repositioning Kubernetes along these lines over the past 18 months, emphasising its role as 'the control plane for cloud-native infrastructure' rather than as a developer-facing platform. At KubeCon Europe 2025, the hallway conversations among platform engineers were less about Kubernetes features and more about what should sit above Kubernetes — and whether that layer, not the orchestrator, would define the next generation of cloud competition.

The partner ecosystem offers a useful triangulation point. Datadog's Q1 2026 platform report, cited by multiple customers in briefings with TechReaderDaily, showed that the share of containers running on managed orchestrators, as distinct from serverless container platforms, has plateaued at roughly 58 percent of containerised workloads, down from a peak of 67 percent in mid-2024. The shift is not a stampede, but the direction is consistent. Snowflake and Databricks, neither of which exposes a Kubernetes interface to users despite running enormous containerised workloads internally, represent the extreme end of the spectrum: sophisticated infrastructure that has fully abstracted the orchestrator away. Their existence alone challenges the assumption that Kubernetes is the inevitable destination for any organisation running containers at scale.

The hyperscalers are not passive observers of this trend. Each is pulling forward container revenue in different ways. AWS, in its Q4 2025 earnings call, noted that EKS Anywhere, which extends EKS to customer data centres, was now deployed at over 1,200 enterprise sites, a number that grew 40 percent year over year. That growth locks in EKS as the management plane even as the underlying workloads diversify. Google has been quietly building GKE Enterprise, a paid tier that bundles multi-cluster management, policy governance, and a service mesh, effectively charging for the complexity that the market increasingly wants to avoid. Microsoft's Azure Arc now reports over 30,000 connected customers, a number that Satya Nadella cited in a February 2026 earnings call, positioning Arc as the bridge between AKS-managed clusters and the broader Azure portfolio, including AI services.

The first signal to watch during the second half of 2026 is whether the hyperscalers begin breaking out managed-Kubernetes revenue from broader container and serverless revenue in their quarterly filings. If they do, it will indicate that the managed-K8s segment has become large enough to matter to investors on its own. If they do not, it may signal reluctance to draw attention to a business line that is being strategically de-emphasised. A second signal is pricing: Google has already made GKE Autopilot free of management charges, billing only for the worker resources consumed. If AWS or Microsoft follows suit, it will confirm that managed Kubernetes has become a loss leader for higher-value services (AI platforms, data, and machine learning) rather than a profit centre in its own right.

The third and most telling signal will come from the CNCF itself. The foundation's 2026 annual survey, expected in December, will for the first time track 'platform engineering platforms' as a distinct deployment category alongside managed Kubernetes. If that category shows double-digit adoption growth — and early preview data shared with CNCF ambassadors suggests it will — the narrative will shift definitively. Kubernetes will not go away. Its install base is too large, its ecosystem too embedded, and its certification programme too entrenched. But the centre of gravity will have moved up the stack. The hyperscaler that understands this first — that stops competing to manage Kubernetes better and starts competing to abstract it away more elegantly — will capture the next $50 billion of cloud platform revenue.

Watch for Google's announcement cycle at Google Cloud Next in October 2026. The company that gave the world Kubernetes is also the company with the most to lose if the managed-K8s market commoditises, and the most to gain if it can define the post-Kubernetes abstraction layer first. A quiet line in a keynote — the kind that most attendees miss and most reporters ignore — about a new serverless runtime, a GKE-free application model, or a pricing change that makes the cluster optional, will tell you more about the next five years of cloud than any market-share chart. The long game is, as always, one sentence long.