EU AI Act Implementing Act Deadlines Reset by Omnibus Deal

The EU AI Act's provisional Omnibus agreement on 8 May recalibrates compliance deadlines, yet the Commission's incomplete guidance pipeline may still hinder deployers and SMEs.

vosu.ai

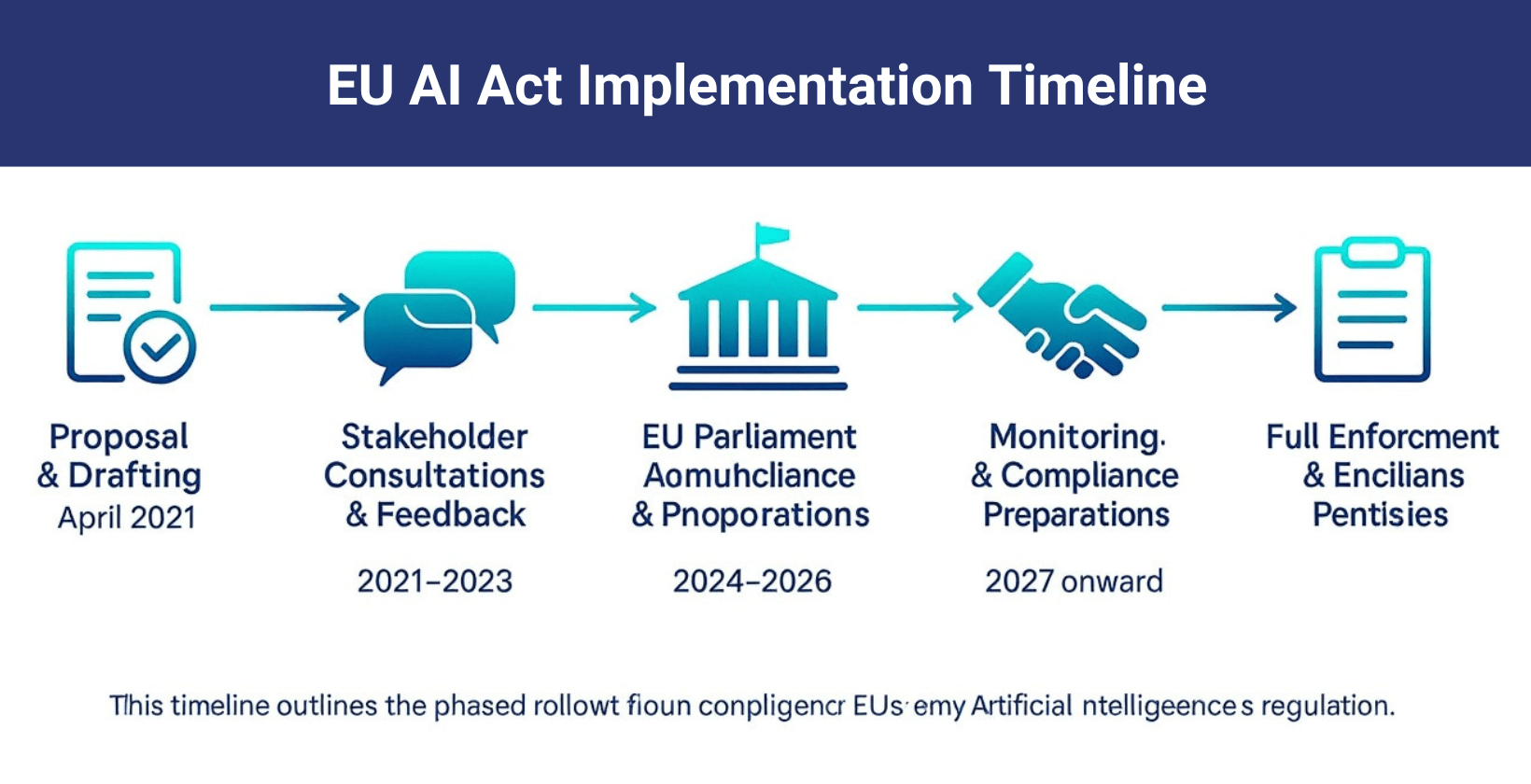

On Friday, 8 May 2026, shortly after 07:00 Brussels time, the European Parliament and the Council of the European Union concluded a marathon trilogue negotiation with a provisional agreement on the Digital Omnibus on AI, the legislative package that amends and simplifies key provisions of Regulation (EU) 2024/1689, the EU AI Act. The deal, reported first by Reuters correspondent Foo Yun Chee and confirmed by the Commission shortly afterward, rewrites the compliance calendar that thousands of companies have been building toward since the Act was published in the Official Journal in July 2024. For the policy professionals tracking this file, the 8 May agreement is the pivot point around which every implementing act, delegated act, and national transposition deadline will now turn.

The Omnibus package, formally tabled by the Commission in February 2026 under the 'simplification and burden reduction' mandate, makes seven principal changes to the AI Act's operative text, as detailed in a JD Supra analysis published by Orrick, Herrington & Sutcliffe LLP on 9 May. The headline shift is the postponement of the compliance deadline for developers of high-risk AI systems classified under Annex III, which had been set for 2 August 2026. That date now moves to 31 December 2027. A further extension, to 2 August 2028, applies to AI systems embedded in products governed by Union harmonisation legislation, including the Machinery Regulation, the Medical Devices Regulation, and the Toy Safety Directive. Providers of general-purpose AI models, meanwhile, see their watermarking and transparency obligations slide from August 2026 to 2 November 2026.

The machinery-rules overlap has been one of the most consequential technical issues in the file. Under the original AI Act text, a manufacturer placing a machinery product on the market that incorporated an AI safety component classified as high-risk could face dual conformity-assessment obligations under both the AI Act and the Machinery Regulation (EU) 2023/1230. The Omnibus agreement resolves this by inserting a clear primacy clause: where a product falls within the scope of a sectoral harmonisation regulation, the conformity assessment under that sectoral legislation is deemed sufficient to address the AI Act's requirements, provided the sectoral rules cover the same risk profile. Jedidiah Bracy of the IAPP reported on 8 May that this clarification alone removes a compliance duplication that would have affected an estimated 40 percent of Annex III high-risk use cases in the industrial sector, according to Commission internal estimates shared with the Parliament's Committee on the Internal Market and Consumer Protection (IMCO).

For deployers of AI systems, as distinct from providers, the calendar remains perilously tight in certain respects. The deployer obligations under Articles 26 and 27, which require entities using high-risk AI systems to implement human oversight measures, retain relevant documentation, and conduct fundamental-rights impact assessments where applicable, still point to 2 August 2026 for Annex III systems placed on the market before that date. An IAPP analysis published on 7 May found that small and medium-sized enterprises in particular face acute evidence gaps: 78 percent of surveyed SMEs had not yet completed the required inventory of AI systems by April 2026, and fewer than one in five had designated a person responsible for human oversight, as Article 26 requires. The Omnibus deal does not postpone the deployer deadline; it only moves the provider-side obligations for new systems. Deployers of existing Annex III systems still face the original August date, a nuance that has received far less press attention than the headline deadline shifts.
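The dates reported so far can be collected into a small lookup, which makes the gap between the provider and deployer calendars easier to see. This is a minimal sketch based only on the dates given in this article; the category keys and the `days_remaining` helper are illustrative names, not terms from the regulation, and nothing here is legal advice.

```python
from datetime import date

# Dates as reported in this article. The keys are hypothetical labels
# chosen for this sketch, not categories defined in the AI Act.
DEADLINES = {
    "deployer_existing_annex_iii": date(2026, 8, 2),   # unchanged by the Omnibus deal
    "gpai_transparency": date(2026, 11, 2),            # moved from August 2026
    "provider_annex_iii": date(2027, 12, 31),          # moved from 2 August 2026
    "provider_product_embedded": date(2028, 8, 2),     # harmonisation-legislation products
}


def days_remaining(category: str, today: date) -> int:
    """Return the number of days from `today` to the reported deadline."""
    return (DEADLINES[category] - today).days


if __name__ == "__main__":
    agreement_day = date(2026, 5, 8)
    # List the deadlines in chronological order, counted from the agreement date.
    for category in sorted(DEADLINES, key=DEADLINES.get):
        print(category, DEADLINES[category].isoformat(),
              days_remaining(category, agreement_day))
```

Counted from the day of the agreement, the deployer date for existing Annex III systems is the first to arrive, well ahead of any of the postponed provider dates.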

The parliamentary path to the 8 May agreement was anything but smooth. On 26 March 2026, the European Parliament voted 471 to 97 to adopt a first-reading position that both delayed the high-risk deadlines and inserted a ban on AI systems designed to create non-consensual intimate imagery, the so-called nudification apps. Evan Schuman, writing in Computerworld, captured the CIO-level conundrum the March vote created: 'rush to comply, or wait and risk non-compliance.' The Parliament's position then entered trilogue negotiations with the Council, where it immediately encountered resistance from member states, led by Germany and France, who sought broader exemptions for industrial AI and argued that the machinery-regulation carve-out should be automatic rather than risk-assessed.

Those trilogue talks collapsed in the early hours of 30 April 2026 after twelve hours of negotiation, as The Next Web reported the same day. The central sticking point was product scope: the Parliament's rapporteur, MEP Brando Benifei (S&D, Italy), insisted that any exemption for machinery-embedded AI must be subject to a case-by-case determination by the relevant notified body, while the Council's preference, articulated by the Cypriot Presidency, was for a blanket exemption. The April failure pushed the file into a May negotiation window that many observers considered the last viable opportunity before the original 2 August Annex III deadline rendered parts of the Omnibus debate moot. Had talks dragged into June, the legal uncertainty for providers facing the original deadline would have become unmanageable, a point repeatedly made by IMCO committee chair MEP Anna Cavazzini (Greens/EFA, Germany) in committee sessions throughout April.

The breakthrough on 8 May came through a compromise on the machinery question: the text now provides that AI systems subject to third-party conformity assessment under a sectoral regulation are exempt from the AI Act's standalone conformity assessment, but only where the sectoral regulation explicitly addresses the same AI-specific risks. Where it does not, the AI Act's requirements apply in parallel. This formulation preserves the principle of single assessment while retaining a backstop that the Parliament's legal service considers consistent with the New Legislative Framework for product regulation. The co-legislators also agreed to require the Commission, by 2 February 2027, to publish a correlation table mapping which sectoral regulations address which AI risks, providing legal certainty before the December 2027 deadline arrives.
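The compromise reads as a two-condition test, which can be sketched as a small decision function. This is an illustration of the rule as reported here, not the legal text: the function name, parameter names, and return labels are all hypothetical, and the treatment of systems without third-party sectoral assessment is an assumption, since the reported wording only addresses third-party-assessed systems.

```python
def assessment_route(third_party_assessed_under_sectoral_law: bool,
                     sectoral_law_covers_same_ai_risks: bool) -> str:
    """Return which conformity-assessment route applies under the reported compromise.

    Labels are illustrative only:
      "sectoral_only"        - exempt from the AI Act's standalone assessment
      "sectoral_plus_ai_act" - AI Act requirements apply in parallel
      "ai_act_applies"       - exemption not available (assumption, see below)
    """
    if not third_party_assessed_under_sectoral_law:
        # Assumption: the exemption as reported is limited to systems subject to
        # third-party assessment, so other systems are treated as not exempt.
        return "ai_act_applies"
    if sectoral_law_covers_same_ai_risks:
        # Single assessment: the sectoral route explicitly addresses the same AI risks.
        return "sectoral_only"
    # Backstop: the sectoral regulation does not address the AI-specific risks.
    return "sectoral_plus_ai_act"


if __name__ == "__main__":
    print(assessment_route(True, True))
    print(assessment_route(True, False))
    print(assessment_route(False, True))
```

The second argument is the one the planned correlation table is meant to settle: until it is published, whether a sectoral regulation covers the same AI risks remains a judgment call for each product.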

The Implementing-Acts Pipeline: Where the Power Now Sits

Beneath the political agreement on the Omnibus lies a quieter but equally consequential story about the implementing acts and delegated acts that the Commission is empowered to adopt under the AI Act. The regulation delegates to the Commission the authority to specify, through implementing acts adopted via the comitology procedure, the technical documentation requirements for high-risk AI systems (Article 11), the format of the EU declaration of conformity (Article 47), and the operational details of the EU database for stand-alone high-risk AI systems (Article 71). Through delegated acts, the Commission can amend the list of high-risk use cases in Annex III (Article 7) and update the technical documentation requirements in Annex IV to reflect technical progress. None of these secondary instruments have yet been published in final form; most remain at the draft stage within the relevant Commission directorates-general.

The calendar for these instruments is governed by the comitology process, a framework that gives member-state representatives, sitting in committees chaired by the Commission, the power to approve or reject draft implementing acts. Under the examination procedure of Regulation (EU) 182/2011, the Commission submits a draft implementing act to the relevant committee, which delivers an opinion by qualified majority. If the committee delivers a negative opinion, the Commission cannot adopt the act. If no opinion is delivered, the Commission may adopt the act except in specific circumstances. The AI Act's implementing acts are spread across several comitology committees: the AI Committee established under Article 98, which is the primary venue; the Committee on Standards and Technical Regulations for some technical specifications; and the relevant sectoral committees for machinery, medical devices, and toys where overlap exists. Each committee has its own meeting calendar, and each meeting requires a minimum notice period of 14 calendar days.
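The outcomes of the examination procedure, together with the notice period, can be sketched in a few lines. This is a simplified model of the procedure as summarised above: the names `Opinion`, `commission_may_adopt`, and `latest_notice_date` are hypothetical, the "specific circumstances" are reduced to a single flag, and the appeal stage that follows a negative opinion is left out.

```python
from datetime import date, timedelta
from enum import Enum


class Opinion(Enum):
    POSITIVE = "positive"
    NEGATIVE = "negative"
    NO_OPINION = "no_opinion"


def commission_may_adopt(opinion: Opinion, specific_circumstances: bool = False) -> bool:
    """Simplified outcome of the examination procedure for a draft implementing act."""
    if opinion is Opinion.NEGATIVE:
        # A negative committee opinion blocks adoption of the draft.
        return False
    if opinion is Opinion.NO_OPINION:
        # Adoption is possible unless one of the specific exceptions applies.
        return not specific_circumstances
    # Positive opinion: the Commission adopts the act.
    return True


def latest_notice_date(meeting: date, notice_days: int = 14) -> date:
    """Last day on which a meeting can be convened, given the notice period above."""
    return meeting - timedelta(days=notice_days)


if __name__ == "__main__":
    print(commission_may_adopt(Opinion.NO_OPINION))
    print(commission_may_adopt(Opinion.NO_OPINION, specific_circumstances=True))
    # Hypothetical meeting date, used only to show the notice arithmetic.
    print(latest_notice_date(date(2026, 9, 30)))
```

The notice arithmetic matters for planning: a draft that misses the convening date for one meeting waits for the next slot in that committee's calendar.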

Commission desk officers indicate that the AI Committee has held three preparatory meetings since January 2026, with draft texts circulating for the technical documentation template and the conformity declaration format. A formal submission to the committee under the examination procedure is expected before the summer recess, with adoption possible by October 2026. The delegated act on Annex III amendments, however, faces a longer timeline; the Commission is required to conduct an impact assessment before proposing changes to the high-risk list, and that assessment is not expected to conclude before the first quarter of 2027. This sequencing means that companies preparing for the December 2027 provider deadline may not know until mid-2027 whether the AI systems they are developing remain classified as high-risk.

The SME evidence gap documented by the IAPP is in part a function of this missing guidance. A Croatian regulatory advisory firm, Vision Compliance, published a readiness report on 1 April 2026 finding that 78 percent of enterprises surveyed were unprepared for their obligations, a figure that the firm's managing director attributed to 'the absence of finalised Commission guidance on what constitutes adequate technical documentation.' The AI Act's text requires providers to draw up technical documentation that demonstrates compliance 'in a clear and comprehensive manner,' but without the Commission's standardised template, the evidentiary threshold remains a matter of interpretation. National data protection authorities, who will enforce the deployer provisions in many member states, have not yet issued coordinated guidance on the intersection of the AI Act's documentation requirements and the General Data Protection Regulation's (GDPR) data protection impact assessment framework, adding a further layer of legal uncertainty for deployers running AI systems that process personal data.

'The agreement is a pragmatic reset of a timeline that was becoming unworkable. But it does not reduce the compliance burden; it only extends the runway.'

Ashley Casovan, Managing Director, IAPP AI Governance Center

The Omnibus agreement also introduces a new prohibited-practice category: AI systems designed or modified to generate non-consensual intimate or sexualised imagery of natural persons, commonly referred to as nudification tools. The prohibition, which will be inserted as a new subparagraph in Article 5, does not apply to AI systems with effective safety measures that prevent users from creating such images, a formulation that places the burden on providers to demonstrate the adequacy of their content safeguards. The provision was added largely in response to the public outcry that followed the proliferation of AI-generated sexualised deepfakes on social media platforms in early 2026, an episode that saw several MEPs, including Benifei and Cavazzini, call for urgent legislative action. The ban enters into force 20 days after the Omnibus amending regulation is published in the Official Journal, which is expected before the August recess.
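Because entry into force is tied to a publication date that is not yet known, the effective date can only be computed once the Official Journal date is fixed. The following is a minimal sketch of that arithmetic; the `entry_into_force` helper is a hypothetical name, and the 2026 publication date is a placeholder chosen for illustration, not a forecast. The first call checks the rule against the AI Act itself, which was published on 12 July 2024 and entered into force on 1 August 2024.

```python
from datetime import date, timedelta


def entry_into_force(publication: date, days_after: int = 20) -> date:
    """Return the date falling `days_after` days after Official Journal publication."""
    return publication + timedelta(days=days_after)


if __name__ == "__main__":
    # Sanity check against the AI Act's own publication and entry-into-force dates.
    print(entry_into_force(date(2024, 7, 12)))  # prints 2024-08-01

    # Placeholder publication date for the Omnibus amending regulation (assumption).
    print(entry_into_force(date(2026, 7, 15)))
```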

The nudification ban, while politically popular, has raised enforcement questions that the text does not fully resolve. The AI Act's enforcement architecture relies on national market surveillance authorities designated under Article 70, but intimate-imagery AI tools are frequently distributed via online platforms hosted outside the Union. The Digital Services Act (Regulation (EU) 2022/2065) provides a complementary enforcement pathway through its obligations on intermediary service providers, but coordination between the AI Act's market surveillance authorities and the Digital Services Coordinators designated under the DSA remains governed by a non-binding Commission recommendation rather than a legally enforceable cooperation mechanism. Several member states, including the Netherlands and Ireland, have pressed the Commission to propose a binding coordination instrument before the ban takes effect.

The international dimension of the calendar resets is beginning to attract attention from comparative-law scholars tracking the Brussels Effect. The original 2 August 2026 deadline for high-risk AI had been synchronised, more or less deliberately, with the implementation timelines of several third-country AI regulatory frameworks, including South Korea's AI Basic Act and Brazil's AI regulation bill (PL 2338/2023), both of which reference the EU AI Act's risk-classification structure. The shift to December 2027 creates a misalignment that, according to academic comparativists at the University of Amsterdam's Institute for Information Law, may accelerate the tendency of third-country regulators to adopt the EU's framework in form while diverging in enforcement pace, a pattern already visible in the ASEAN AI governance guide published in February 2026.

The next procedural step on the calendar is the formal adoption of the Omnibus amending regulation by the Parliament and the Council, which is expected to occur through a simplified written procedure before the end of June 2026, avoiding the need for a full plenary vote. The regulation will then be published in the Official Journal, likely in July, and will enter into force on the twentieth day following publication. Between now and then, the Commission is expected to move forward with submitting the first tranche of implementing acts to the AI Committee under the examination procedure. The date to watch is 15 October 2026, the last scheduled meeting of the AI Committee before the revised watermarking deadline takes effect on 2 November. If the technical documentation template and conformity declaration format are not adopted by that meeting, companies face the prospect of complying with obligations whose evidentiary standards remain undefined. For policy professionals in Brussels and Luxembourg, and for in-house compliance teams across the Union, the summer of 2026 is not a recess; it is the last clear stretch of road before the regulatory machinery begins to move.