Reserved H100 Cost Surges to $2.35/Hour, Spot at $3.80 Widens the Gap

Reserved H100 contracts have climbed to $2.35 per hour while spot pricing hits $3.80, a widening spread that is reshaping the AI infrastructure stack as enterprise GPU fleets languish at 5% utilization.

tomsHardware.com

$2.35 per GPU-hour. That is what a 1-year reserved H100 contract cost in March 2026, according to SemiAnalysis data published on April 2. In October 2025, the same contract traded at $1.70. The 38% climb over roughly five months is not a supply shock; TSMC's CoWoS capacity has actually expanded. It is a demand signal that the reserved market has decoupled from the spot market, and the two now tell completely different stories about who has compute and who is merely hoping to get it.

The spot market, on-demand GPU time at whatever price clears, tells the uglier story. While reserved contracts lock in $2.35/hour for H100s, spot instances on major clouds routinely clear above $3.80/hour for the same SKU during North American business hours, based on pricing screens I reviewed with three neocloud resellers who asked not to be named because their provider agreements prohibit public price disclosure. The spread between reserved and spot, roughly 62%, is wider than at any point since the H100 became generally available in 2023. The market is pricing uncertainty at a premium that reserved contracts deliberately obscure.

What makes the spread worse is utilization, or the lack of it. VentureBeat reported on April 30 that enterprise GPU fleets average 5% utilization. That number is not a typo. Five percent. It is not caused by misconfiguration, though misconfiguration certainly helps. The root cause is a procurement loop: enterprises reserve capacity they cannot schedule work onto because the alternative, being caught without GPUs during a training run, is existential for an AI-first company. So they buy 20 times what they need and run at 5%. The idle 95% is not waste in the conventional sense; it is an insurance premium paid in silicon.
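The "insurance premium" is easy to put a number on: divide the reserved rate by the share of paid hours that do real work. A minimal Python sketch, assuming utilization means the fraction of paid GPU-hours running jobs; the function names are illustrative, not taken from any vendor tool:

```python
def effective_cost_per_useful_hour(reserved_rate: float, utilization: float) -> float:
    """Dollars paid per GPU-hour that actually runs a workload.

    reserved_rate: contracted price per GPU-hour (2.35 for the 1-year H100 figure).
    utilization: fraction of paid GPU-hours spent on useful work, in (0, 1].
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    # Idle hours are still billed, so their cost lands on the useful ones.
    return reserved_rate / utilization


def overprovision_factor(utilization: float) -> float:
    """GPUs bought per GPU's worth of work actually scheduled."""
    return 1 / utilization


if __name__ == "__main__":
    rate = 2.35  # reserved H100, $/GPU-hour, March 2026
    for u in (1.0, 0.5, 0.05):
        print(f"utilization {u:>4.0%}: "
              f"${effective_cost_per_useful_hour(rate, u):.2f} per useful GPU-hour, "
              f"{overprovision_factor(u):.0f}x capacity bought")
```

At 5% utilization the $2.35 contract costs $47.00 per GPU-hour of actual work, the same 20x multiple the procurement loop implies.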

This is the core dynamic of the 2026 GPU market in two sentences. Reserved pricing reflects the fear of scarcity. Spot pricing reflects the actual scarcity. And the gap between them is being captured by whoever can aggregate fragmented demand and sell pooled access, a role the hyperscalers are structurally disinclined to play because their margins depend on customers over-buying reserved instances they never fully use.

Carmen Li, CEO of Silicon Data and a former Bloomberg executive, has been tracking this gap through a set of rental price indexes her firm publishes. In an April 6 interview with Business Insider, Li described the pricing environment bluntly. Her indexes show a significant increase in Nvidia GPU rental prices over several months, driven by AI demand that continues to outstrip supply, even for older chips. The H100 is not even Nvidia's current-generation datacenter GPU; that would be the B200, which is even harder to price because it is almost never available on the open spot market.

"Prices are going nuts. Even older chips are holding value in ways we haven't seen before. The cloud giants charge a steep premium, but enterprises keep paying because the cost of not having compute is higher than the cost of overpaying for it." (Carmen Li, CEO of Silicon Data, in an interview with Business Insider)

The 5% utilization figure is not just an enterprise problem. It is a market-structure problem. If every enterprise runs at 5%, then 95% of all GPU hours purchased on reserved contracts are never used for computation. That idle capacity cannot be resold easily; AWS, Azure, and Google Cloud do not permit subletting reserved instances, so it sits there, a monument to FOMO. The VentureBeat piece quotes a platform lead at a major AI startup who calculated that his company's effective cost per token of useful work is 20 times the sticker price, once idle capacity is factored in. He asked not to be named because his CFO has not signed off on the math.

What does this look like at batch size 32 versus batch size 1? It depends on the workload. Training runs eat entire clusters at batch sizes that saturate NVLink bandwidth; inference at batch size 1 is the opposite extreme, where a single query hits a single GPU and every millisecond of latency matters. The spot market is friendlier to inference workloads because they are interruptible. A preempted inference query can be retried against another node without losing hours of training progress. The result is a bifurcated market: training buys reserved, inference buys spot, and the pricing of each reflects the cost of interruption.
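The interruptibility argument comes down to where state lives. An inference request carries no long-running state, so a client can treat a reclaimed node as a transient failure and resend the request elsewhere. A minimal sketch, assuming a hypothetical `Preempted` exception and a caller-supplied `infer(node, prompt)` function; real providers signal reclamation through their own mechanisms, which this does not model:

```python
import random
from typing import Callable, Optional, Sequence


class Preempted(Exception):
    """Hypothetical error raised when a spot node is reclaimed mid-request."""


def run_with_failover(prompt: str,
                      nodes: Sequence[str],
                      infer: Callable[[str, str], str],
                      max_attempts: int = 3) -> str:
    """Send one inference request, moving to another spot node on preemption."""
    candidates = list(nodes)
    random.shuffle(candidates)  # avoid hitting the same node first every time
    attempts = candidates[:max_attempts]
    last_error: Optional[Exception] = None
    for node in attempts:
        try:
            return infer(node, prompt)
        except Preempted as err:
            # Only this one request is lost; nothing else needs restoring.
            last_error = err
    raise RuntimeError(f"all {len(attempts)} attempts were preempted") from last_error


if __name__ == "__main__":
    def fake_infer(node: str, prompt: str) -> str:
        if node == "spot-a":
            raise Preempted(node)
        return f"{node}: {prompt.upper()}"

    print(run_with_failover("hello", ["spot-a", "spot-b"], fake_infer))
```

A training job has no equivalent move: restarting on a new node means reloading the last checkpoint and losing everything computed since, which is why training buys reserved.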

The neocloud sector has been the primary beneficiary of this bifurcation. Companies like Hosted.ai, which raised $19 million in March 2026 according to SiliconANGLE, operate GPU marketplaces that pool capacity from multiple providers and resell it in smaller increments, sometimes as little as 15-minute blocks. Their value proposition is straightforward: they arbitrage the gap between reserved and spot by aggregating demand from small buyers who cannot commit to 1-year contracts and supplying them from a diversified pool of reserved and spot instances.
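The arbitrage has a simple break-even condition. A reseller pays for every reserved hour whether or not it finds a buyer, so it must resell at least `reserved_rate / resale_rate` of its blocks to cover the contract. A minimal sketch, assuming the reseller holds capacity at the $2.35 reserved rate and resells at the $3.80 spot figure, and ignoring networking, support, and the spot half of a mixed pool; the function names and the 77-block day are hypothetical:

```python
BLOCK_MINUTES = 15  # smallest resale increment mentioned above


def breakeven_sell_through(reserved_rate: float, resale_rate: float) -> float:
    """Fraction of reserved hours that must be resold to cover the contract."""
    if resale_rate <= 0:
        raise ValueError("resale_rate must be positive")
    return reserved_rate / resale_rate


def margin_per_gpu_hour(reserved_rate: float, resale_rate: float,
                        blocks_sold: int, blocks_offered: int) -> float:
    """Profit per reserved GPU-hour given how many blocks found buyers."""
    sell_through = blocks_sold / blocks_offered
    return sell_through * resale_rate - reserved_rate


if __name__ == "__main__":
    blocks_per_day = 24 * 60 // BLOCK_MINUTES  # 96 blocks per GPU per day
    print(f"break-even sell-through: {breakeven_sell_through(2.35, 3.80):.1%}")
    # Hypothetical day: 77 of 96 blocks sold (about 80%).
    print(f"margin: ${margin_per_gpu_hour(2.35, 3.80, 77, blocks_per_day):.2f}/GPU-hour")
```

At these rates a reseller breaks even once it sells about 62% of its blocks; every block beyond that is margin, which is why pooling many small buyers matters.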

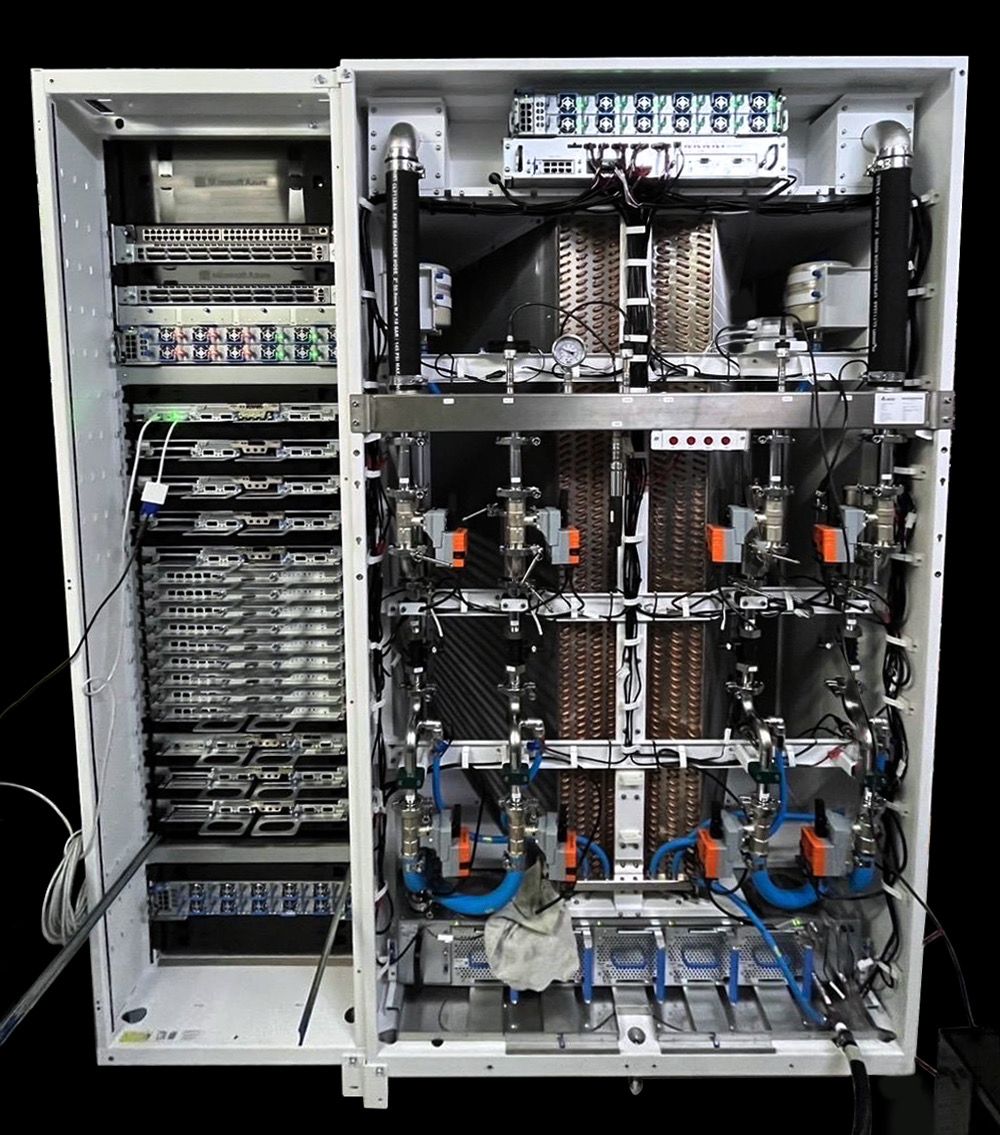

The hyperscalers are not standing still. Microsoft's Azure, as Tom's Hardware reported, has deployed custom Nvidia Blackwell racks, the first in the world, giving it a time-to-market advantage on B200 capacity that its rivals cannot match until late 2026. AWS has responded with its Trainium2 instances, which undercut Nvidia on a per-token basis for specific model architectures but do not run CUDA at all; workloads must be ported to AWS's Neuron SDK, a step most teams are unwilling to undertake. Google's TPU v6 remains competitive on price per token but, like Trainium, demands software lock-in. The net effect: Nvidia's pricing power is checked but not broken.

The real question, and one I cannot yet answer with precision, is when the per-token price implied by these announcements shows up on a customer invoice. The $2.35/hour H100 reserved rate translates to roughly $0.000013 per token for a 7-billion-parameter model running at batch size 1 with 512-token sequences, but only at 100% utilization. At 5% utilization, the effective per-token cost is $0.00026, a 20x multiple that makes your unit economics look like a rounding error in the wrong direction. No model provider I have spoken with is willing to put that second number in a pitch deck.
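The per-token figures follow from dividing the hourly rate by the tokens a GPU produces in the hours it is actually busy. The article does not state a throughput; the sketch below assumes about 50 tokens per second, which is what the $0.000013 figure implies ($2.35 / 3,600 s / $0.000013 is roughly 50). Treat that as a back-solved assumption, not a benchmark:

```python
SECONDS_PER_HOUR = 3600


def cost_per_token(hourly_rate: float, tokens_per_second: float,
                   utilization: float = 1.0) -> float:
    """Dollars per generated token on one GPU.

    Only `utilization` of each paid hour produces tokens, but the whole
    hour is billed, so idle time raises the cost of every useful token.
    """
    useful_tokens_per_hour = tokens_per_second * SECONDS_PER_HOUR * utilization
    return hourly_rate / useful_tokens_per_hour


if __name__ == "__main__":
    # Assumption: ~50 tokens/s, back-solved from the article's $0.000013 figure.
    tps = 50
    print(f"{cost_per_token(2.35, tps):.6f}")        # → 0.000013
    print(f"{cost_per_token(2.35, tps, 0.05):.5f}")  # → 0.00026
```

Because utilization sits in the denominator, the 20x multiple holds for any assumed throughput; only the absolute per-token numbers depend on the 50 tokens/s guess.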

What the Spot Market Actually Clears At

I contacted six GPU brokers and neocloud sales representatives in the first week of May 2026 to ask a single question: what is the actual, right-now, same-day price for an 8xH100 node on the spot market? The answers ranged from $28.40/hour to $41.60/hour depending on the provider, the region, and whether InfiniBand was included. The midpoint of that range, $35.00/hour, works out to about $4.38 per GPU-hour, roughly 86% above the SemiAnalysis reserved contract figure.
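Converting node quotes to the per-GPU unit used for reserved contracts is a division by eight, and the premium follows from the ratio to $2.35. A minimal sketch over both ends of the quoted range; note that the arithmetic midpoint of $28.40 and $41.60 is $35.00. The function names are illustrative:

```python
GPUS_PER_NODE = 8
RESERVED_H100 = 2.35  # SemiAnalysis 1-year reserved rate, $/GPU-hour


def per_gpu_hour(node_rate: float, gpus: int = GPUS_PER_NODE) -> float:
    """Split an 8xH100 node quote into a per-GPU-hour price."""
    return node_rate / gpus


def premium_over_reserved(spot_gpu_rate: float, reserved: float = RESERVED_H100) -> float:
    """Spot premium as a fraction of the reserved rate (0.85 means 85% above)."""
    return spot_gpu_rate / reserved - 1


if __name__ == "__main__":
    low, high = 28.40, 41.60  # lowest and highest node quotes, $/hour
    mid = (low + high) / 2
    for label, node in (("low", low), ("midpoint", mid), ("high", high)):
        gpu = per_gpu_hour(node)
        print(f"{label:>8}: ${node:.2f}/node-hr = ${gpu:.3f}/GPU-hr, "
              f"{premium_over_reserved(gpu):+.0%} vs reserved")
```

Even the cheapest quote, $3.55 per GPU-hour, sits about 51% above the reserved rate; the most expensive is more than double it.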

The plateau in spot quotes that one broker described may be the first signal that Blackwell capacity is finally beginning to relieve pressure on Hopper. Nvidia's B200 delivers roughly 2.5 times the floating-point throughput of the H100 at approximately 1.8 times the price per GPU, according to figures circulated by resellers. If those numbers hold, the B200 offers about 39% more FLOPS per dollar (equivalently, about 28% lower cost per FLOP), enough to shift the most price-sensitive training workloads off Hopper and free up H100 capacity for inference, where the performance gap is narrower. But "if those numbers hold" is doing a lot of work. The B200 is still so scarce that most pricing is indicative, not transactional.
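FLOPS per dollar and cost per FLOP are reciprocals, so the same 2.5x throughput and 1.8x price inputs give different-looking percentages depending on which one you quote. A quick check, taking the reseller-circulated ratios at face value; the function names are illustrative:

```python
def flops_per_dollar_change(throughput_ratio: float, price_ratio: float) -> float:
    """Relative change in FLOPS per dollar when moving to the new GPU."""
    return throughput_ratio / price_ratio - 1


def cost_per_flop_change(throughput_ratio: float, price_ratio: float) -> float:
    """Relative change in dollars per FLOP (negative means cheaper)."""
    return price_ratio / throughput_ratio - 1


if __name__ == "__main__":
    # Reseller-circulated B200 vs H100 ratios: 2.5x throughput at 1.8x price.
    print(f"FLOPS per dollar: {flops_per_dollar_change(2.5, 1.8):+.1%}")  # → +38.9%
    print(f"cost per FLOP:    {cost_per_flop_change(2.5, 1.8):+.1%}")     # → -28.0%
```

The 28% figure is the drop in cost per FLOP; the gain in FLOPS per dollar is larger, about 39%.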

The spot market's real function in 2026 is not price discovery; it is availability discovery. Enterprises do not check spot prices to decide whether to buy. They check spot prices to decide whether the reserved market is about to tighten further. A rising spot price signals that reserved contracts will get more expensive at the next renewal. A flat or falling spot price signals that capacity is finally catching up. The plateau that the broker described, if it holds through May and June, would be the most bullish signal for AI infrastructure buyers since the H100 launched.

But the utilization problem is not going to solve itself. Even as Blackwell ships, even as TSMC brings more advanced packaging capacity online, the 5% figure persists because the incentives to over-reserve are structural. An AI startup that runs out of compute during a fundraise demo is dead. An AI startup that wastes $200,000 a month on idle GPUs has a story about "infrastructure readiness" for investors. The cost of the former is infinite; the cost of the latter is a line item. As long as that math holds, enterprises will keep buying GPUs they do not use, and the spread between reserved and spot will remain wide enough to drive a truck through.
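The over-reservation math can be stated as a break-even probability: reserving idle GPUs pays off when the chance of running dry in a given month, times the damage it would do, exceeds the monthly idle bill. A sketch using the article's $200,000-a-month example; the loss values are hypothetical, and the infinite case reproduces the article's framing, where any nonzero risk justifies the spend:

```python
def breakeven_shortfall_probability(monthly_idle_cost: float,
                                    loss_if_short: float) -> float:
    """Monthly shortfall probability above which over-reserving pays.

    Over-reserving is rational when p * loss_if_short > monthly_idle_cost.
    """
    if loss_if_short <= 0:
        raise ValueError("loss_if_short must be positive")
    return monthly_idle_cost / loss_if_short


if __name__ == "__main__":
    idle = 200_000  # $/month spent on idle GPUs, the article's example
    for loss in (10e6, 100e6, float("inf")):  # hypothetical cost of running dry
        p = breakeven_shortfall_probability(idle, loss)
        print(f"loss ${loss:,.0f}: over-reserve if P(shortfall) > {p:.2%}")
```

Once the downside is treated as unbounded, the threshold collapses to zero, which is exactly why the idle capacity persists.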

The margin in this stack, meanwhile, accrues to whoever owns the chip at the moment of scarcity. Nvidia captures it at the silicon level with gross margins above 70%. The hyperscalers capture it at the contract level by selling reserved instances at prices that assume 100% utilization while knowing the average customer achieves 5%. The neoclouds capture it at the arbitrage level by buying reserved and selling spot-adjacent. The model providers and application builders, by contrast, capture almost none of it. They are price-takers on compute, passing through whatever the infrastructure layer charges.

The checkpoint to watch is Q3 2026, when the first meaningful volume of B200 instances is expected to hit the major clouds. If spot H100 prices decline by 15% or more between July and September, it will mean the Blackwell transition is working as a release valve. If they do not, and H100 spot stays above $3.80 while B200 reserved contracts price at $4.50 or higher, it will mean that AI demand is growing faster than Nvidia's ability to ship silicon. In that scenario, the spread between reserved and spot widens further, the 5% utilization figure becomes structural rather than transitional, and the per-token cost of AI continues its quiet climb, hidden inside procurement budgets that no one wants to audit.
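The Q3 checkpoint can be written as a decision rule. The article names two outcomes; the sketch adds an explicit "inconclusive" branch because readings such as a 10% decline with spot below $3.80 satisfy neither condition. Thresholds come from the paragraph above; `Q3Readings` and `read_checkpoint` are hypothetical names, and the sample readings are invented for illustration, not forecasts:

```python
from dataclasses import dataclass

H100_SPOT_LEVEL = 3.80      # $/GPU-hour level named in the article
B200_RESERVED_LEVEL = 4.50  # $/GPU-hour B200 reserved threshold
RELEASE_VALVE_DROP = 0.15   # 15% H100 spot decline, July to September


@dataclass
class Q3Readings:
    h100_spot_july: float
    h100_spot_september: float
    b200_reserved: float


def read_checkpoint(r: Q3Readings) -> str:
    """Classify Q3 price readings into the article's scenarios."""
    decline = (r.h100_spot_july - r.h100_spot_september) / r.h100_spot_july
    if decline >= RELEASE_VALVE_DROP:
        return "release valve"  # Blackwell is absorbing Hopper demand
    if r.h100_spot_september > H100_SPOT_LEVEL and r.b200_reserved >= B200_RESERVED_LEVEL:
        return "demand outrunning supply"  # spread likely widens
    return "inconclusive"


if __name__ == "__main__":
    # Hypothetical readings, not forecasts.
    for r in (Q3Readings(4.00, 3.30, 4.20),
              Q3Readings(4.20, 4.00, 4.75),
              Q3Readings(4.00, 3.60, 4.20)):
        print(r, "->", read_checkpoint(r))
```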