Spot vs Reserved GPU Pricing: $1.12/hr to $8.42/hr in 2026

The GPU market's 2026 split pits $1.12/hr spot instances against $2.35/hr reserved H100 contracts, with enterprises hoarding idle capacity that drives both prices upward.

northflank.com

On April 2, 2026, SemiAnalysis dropped a number that should have reset every boardroom conversation about AI infrastructure: the 1-year reserved contract price for an NVIDIA H100 hit $2.35 per GPU-hour in March, up 38% from $1.70 in October 2025. That is a $0.65 increase in five months on a chip that first shipped in 2022. In any normal hardware cycle, a three-year-old accelerator gets cheaper, not 38% more expensive. But the GPU market in Q2 2026 is not normal. It is two markets stitched together by fear, and the seam is starting to tear.

The first market is the one enterprises see: reserved instances, 1-year and 3-year commitments, contracted through hyperscalers or neoclouds at rates that look like mortgage payments. The second market is the spot market — interruptible, uncommitted, priced by the hour, often 40-60% cheaper than reserved for the same SKU. The gap between these two markets is no longer a curiosity for FinOps engineers. It is the central tension in the per-token economy, because every model provider and every enterprise running inference is picking a side, knowingly or not, every time they allocate a batch.

Start with what reserved actually costs. According to Silicon Data CEO Carmen Li, whose firm tracks GPU rental pricing across cloud providers and neoclouds, the indexes tell a blunt story. Li told Business Insider in early April that Nvidia GPU rental prices have seen a "significant increase" over the past several months, with even older-generation chips holding value. "The cloud giants charge a premium that has no relationship to the hardware depreciation curve," Li said. A 3-year reserved H100 on AWS currently runs roughly $2.80–$3.40 per GPU-hour depending on the region and upfront payment structure. The same GPU, purchased outright on the grey market, costs roughly $25,000–$28,000. At $3.00/hr, a 3-year reserved instance generates $78,840 in revenue against a $27,000 asset: a 192% markup over the hardware cost, or roughly a 66% gross margin, before power, cooling, and networking. The hyperscalers are not renting compute. They are printing money.
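The revenue-versus-hardware comparison can be reproduced in a few lines. This is a minimal sketch that, like the paragraph, counts only the rental rate and the purchase price; power, cooling, networking, and financing are deliberately left out, so the result is an upper bound rather than a P&L. Note that 192% is the markup over hardware cost; expressed as a gross margin on revenue, the same inputs come to about 66%. The function names are illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours; leap days ignored


def reserved_revenue(rate_per_gpu_hour: float, years: int) -> float:
    """Rental revenue from one GPU billed every hour of the contract term."""
    return rate_per_gpu_hour * HOURS_PER_YEAR * years


def markup_and_margin(revenue: float, hardware_cost: float) -> tuple[float, float]:
    """Return (markup on hardware cost, gross margin on revenue).

    markup = (revenue - cost) / cost     -> the "192%" style figure
    margin = (revenue - cost) / revenue  -> share of each rental dollar left over
    Power, cooling, networking, and financing are excluded.
    """
    profit = revenue - hardware_cost
    return profit / hardware_cost, profit / revenue


revenue = reserved_revenue(3.00, years=3)
markup, margin = markup_and_margin(revenue, 27_000)
print(f"revenue=${revenue:,.0f} markup={markup:.0%} margin={margin:.0%}")
```

With the paragraph's inputs this prints `revenue=$78,840 markup=192% margin=66%`.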

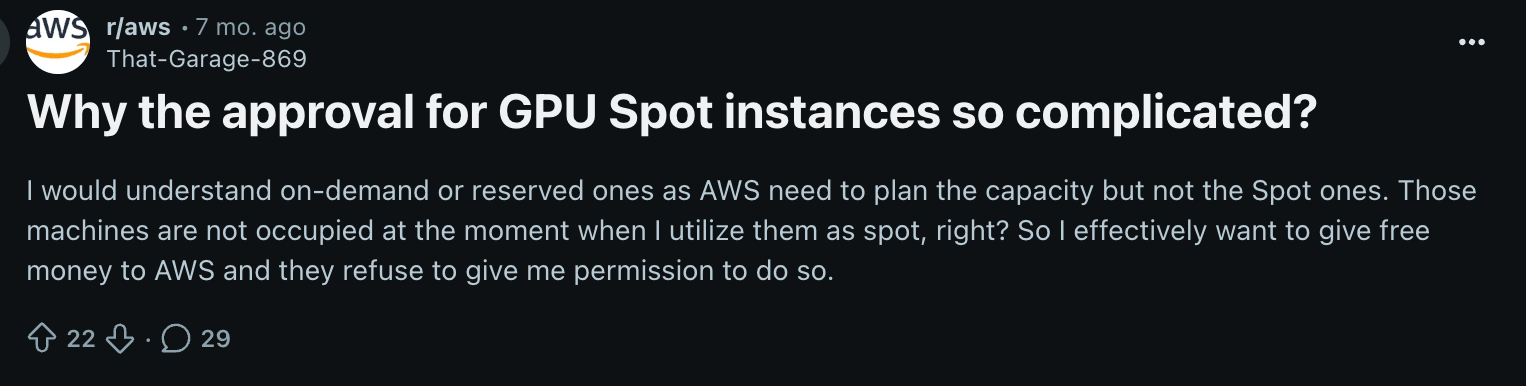

Now look at spot. On any given day in May 2026, H100 spot instances across Lambda, CoreWeave, and RunPod trade between $1.12 and $1.55 per GPU-hour, roughly 45-65% below the 3-year reserved rate. That discount exists because spot capacity can be reclaimed with 30 seconds' notice. For a batch inference workload processing 10 million tokens at 150 tok/s on 8 GPUs, a spot interruption mid-batch costs roughly $0.03 in wasted compute and requires a checkpoint restart. The math is not close: spot wins for any workload that can tolerate preemption and has checkpointing built into its inference pipeline. Yet enterprise adoption of spot for inference remains below 15% of total GPU-hours, according to FinOps leads at three AI-heavy startups I spoke with this week. The reason is not technical. It is procurement.
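The $0.03 interruption figure can be sanity-checked by converting dollars back into lost node time. A minimal sketch, assuming (our assumption; the paragraph does not say) that all 8 GPUs are billed at the $1.12 spot floor and that "wasted compute" is only the work done since the last checkpoint. Under those assumptions $0.03 corresponds to about 12 seconds of node time, which is consistent with checkpointing roughly every 24 seconds if preemptions land at random; discarding a full 30-second notice window would cost closer to $0.07. The function names are hypothetical.

```python
SECONDS_PER_HOUR = 3600


def interruption_cost(gpus: int, price_per_gpu_hour: float, lost_seconds: float) -> float:
    """Dollar value of node time discarded when a preemption throws away `lost_seconds` of work."""
    return gpus * price_per_gpu_hour * lost_seconds / SECONDS_PER_HOUR


def seconds_lost_for(cost: float, gpus: int, price_per_gpu_hour: float) -> float:
    """Inverse of interruption_cost: how many seconds of node time a dollar loss represents."""
    return cost * SECONDS_PER_HOUR / (gpus * price_per_gpu_hour)


def expected_lost_seconds(checkpoint_interval_s: float) -> float:
    """If preemptions land uniformly between checkpoints, half an interval is lost on average."""
    return checkpoint_interval_s / 2


print(round(seconds_lost_for(0.03, gpus=8, price_per_gpu_hour=1.12), 1))
print(round(interruption_cost(8, 1.12, lost_seconds=30), 3))
```

Shortening the checkpoint interval shrinks the expected loss linearly, at the price of more frequent checkpoint writes.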

Nobody in procurement ever got fired for signing a 3-year reserved commitment with AWS. Nobody got promoted for saving 45% on spot and then explaining to the CTO why inference went down for 90 seconds during a board meeting.

(FinOps lead at a Series C AI company, off the record)

This procurement dynamic doesn't just waste money — it warps the entire pricing curve. When enterprises lock in 3-year reserved contracts for capacity they then use at 5% utilization — a figure confirmed by Cast AI's analysis of thousands of Kubernetes clusters running AI workloads, reported by VentureBeat on April 30 — they are not just burning cash. They are removing supply from the spot market that would otherwise exert downward pressure on reserved pricing. GPU clouds love this: every dollar of idle reserved capacity is pure margin. The enterprise has already paid. The GPU sits dark. The cloud provider's spot pool gets a little tighter. And the next quarter's reserved pricing ticks up another 5-8% because "demand remains strong."
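Idle reserved capacity is easiest to see as a cost per useful GPU-hour. A minimal sketch, assuming the only thing that changes is the fraction of billed hours that do work: at the $2.35 reserved rate and the 5% utilization Cast AI reported, each useful GPU-hour costs about $47. The second function (a hypothetical helper) gives the utilization above which reserved beats paying the $1.12 spot rate only for hours actually used, ignoring preemption and spot availability, both of which tilt the comparison toward reserved.

```python
def cost_per_useful_gpu_hour(price_per_gpu_hour: float, utilization: float) -> float:
    """Reserved capacity bills every hour, but only `utilization` of those hours do work."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return price_per_gpu_hour / utilization


def breakeven_utilization(spot_price: float, reserved_price: float) -> float:
    """Utilization above which reserved beats paying spot only for the hours you use.

    Ignores preemption and spot availability, both of which favor reserved.
    """
    return spot_price / reserved_price


print(round(cost_per_useful_gpu_hour(2.35, 0.05), 2))  # reserved H100 at 5% utilization
print(round(breakeven_utilization(1.12, 2.35), 3))     # roughly 48%
```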

TechSpot's Q2 2026 GPU pricing survey, published April 29, captured the mood from the hardware side: prices have "stopped getting worse" but "have not gotten much better either." Retail GPU pricing for consumer cards remains elevated, but the real story TechSpot identified is availability: the gap between MSRP and street price for workstation-class GPUs like the RTX PRO 6000 now exceeds 40%, a premium driven entirely by small AI labs and startups buying whatever they can plug into a PCIe slot. These buyers cannot get allocation from hyperscalers, so they pay 40% over list for a card they will run at batch size 1 on a single-node setup. The economics of that (roughly $8.42 per effective GPU-hour once you amortize the hardware premium over actual utilization) make hyperscaler reserved pricing look cheap. The bottom of the market is being squeezed hardest.
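The article does not list the inputs behind its $8.42 figure, so this sketch only shows the shape of the calculation: a street price (list plus the 40% premium), straight-line amortization over an assumed service life, and division by the fraction of hours the card does useful work. The function names and any inputs you feed them are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365


def street_price(msrp: float, premium: float) -> float:
    """Price paid given a fractional premium over list (0.40 means 40% over MSRP)."""
    return msrp * (1 + premium)


def amortized_cost_per_effective_hour(
    purchase_price: float, useful_life_years: float, utilization: float
) -> float:
    """Straight-line hardware cost spread over the hours the card actually does useful work.

    Power, hosting, and resale value are excluded; every input is the buyer's own estimate.
    """
    effective_hours = useful_life_years * HOURS_PER_YEAR * utilization
    return purchase_price / effective_hours
```

Halving utilization doubles the effective hourly cost, which is how a premium-priced card run at batch size 1 can end up costing more per useful hour than a reserved cloud GPU.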

The neoclouds — CoreWeave, Lambda, RunPod, Nebius — sit in the middle of this mess and are, for the first time, starting to behave like market makers rather than pure capacity resellers. CoreWeave's recent signing of a $21 billion Meta contract through 2032, on top of its multi-year Anthropic deal, means the largest neocloud now has enough committed demand to plan capacity builds years ahead. That scale lets CoreWeave offer spot instances with less preemption risk — because when Meta isn't using its contracted capacity, those GPUs flow into the spot pool. A neocloud sales rep described it to me as "reserved that acts like spot for the right customer." The catch: you need to be spending at least $500,000 a month to get that treatment. Below that threshold, you are trading against everyone else in the public spot pool, and the preemption rate during US business hours has been running 22-28% since January.

What the Batch Size 32 vs. Batch Size 1 Split Reveals

If you want to understand who actually captures margin in this stack, ignore the SKU list and look at batch size. A cloud provider selling reserved H100 instances at $2.35/hr typically assumes the customer is running at batch size 32 or higher, because at batch 32 a single H100 generating 150 tok/s per sequence delivers roughly 4,800 tok/s of aggregate throughput. That works out to about $0.000136 per thousand tokens, or $0.136 per million, and it is the number model providers quote in their API pricing announcements. But take the same H100 and run it at batch size 1 (the regime of interactive chat, single-prompt inference, and the actual product experience most AI applications deliver), and throughput collapses to about 30-45 tok/s. Even at the top of that range, the cost jumps to about $0.0145 per thousand tokens, or $14.50 per million. That is a 106x swing in unit economics, and it is invisible in every reserved-instance invoice.
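The per-token arithmetic in this paragraph reduces to one division: hourly GPU price over tokens produced in that hour. A minimal sketch, assuming the paragraph's own inputs (150 tok/s per sequence at batch 32, 45 tok/s at batch 1) and a GPU kept busy for the whole hour; results are expressed per million tokens, the unit API price lists use. The function name is illustrative.

```python
def cost_per_million_tokens(price_per_gpu_hour: float, tokens_per_second: float) -> float:
    """GPU rental cost divided by tokens produced in one hour, scaled to a million tokens."""
    tokens_per_hour = tokens_per_second * 3600
    return price_per_gpu_hour / tokens_per_hour * 1_000_000


batch32 = cost_per_million_tokens(2.35, 150 * 32)  # 150 tok/s per sequence x 32 sequences
batch1 = cost_per_million_tokens(2.35, 45)         # top of the 30-45 tok/s batch-1 range
print(f"batch 32: ${batch32:.3f}/M  batch 1: ${batch1:.2f}/M  ratio: {batch1 / batch32:.1f}x")
```

Because price cancels out of the ratio, the swing between the two regimes is simply 4,800 / 45, whatever the hourly rate.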

This batch-size squeeze is why second-tier model providers are increasingly running inference on spot. If you are charging $0.15 per million input tokens for a Llama-4-class model (roughly the market rate as of May 2026) and running at batch size 1 on reserved instances, your inference cost alone is about $14.50 per million tokens, nearly 100 times what you charge. The math does not work. But if you run the same workload on spot at $1.12/hr and engineer your serving stack to batch dynamically when traffic allows (pushing average batch size to 8-12), your effective cost drops to roughly $0.00034 per thousand tokens, or about $0.34 per million. That is roughly 40x cheaper than batch-size-1 reserved; it still sits above the $0.15 list price, but it turns a two-orders-of-magnitude loss into a gap that further batching and throughput optimization can plausibly close. The difference between a model API business that survives 2026 and one that does not may be entirely determined by whether its inference stack can absorb spot preemption without the end user noticing.
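Dynamic batching is what makes an 8-12 average batch size reachable when traffic is uneven. A minimal sketch of a common size-or-timeout policy, assuming requests arrive with millisecond timestamps: a batch opens at its first request and closes when it holds `max_batch` requests or when the next request would arrive after the oldest one's deadline. The function and parameter names are illustrative, not any serving framework's API, and production engines that batch continuously at the token level behave differently from this request-level model.

```python
def form_batches(arrivals_ms: list[float], max_batch: int, max_wait_ms: float) -> list[list[int]]:
    """Group request indices into batches using a size-or-timeout policy."""
    order = sorted(range(len(arrivals_ms)), key=lambda i: arrivals_ms[i])
    batches: list[list[int]] = []
    current: list[int] = []
    deadline = 0.0
    for i in order:
        t = arrivals_ms[i]
        # Close the open batch if it is full or this request arrives after its deadline.
        if current and (len(current) == max_batch or t > deadline):
            batches.append(current)
            current = []
        # A new batch's deadline is set by its oldest (first) request.
        if not current:
            deadline = t + max_wait_ms
        current.append(i)
    if current:
        batches.append(current)
    return batches


def average_batch_size(batches: list[list[int]]) -> float:
    """Mean number of requests per dispatched batch."""
    return sum(len(b) for b in batches) / len(batches) if batches else 0.0


print(form_batches([0, 1, 2, 3, 100], max_batch=3, max_wait_ms=10))
```

The timeout bounds how much queueing latency a user-facing request can absorb: raising `max_wait_ms` lifts the average batch size, and with it throughput per GPU-hour, at the expense of time to first token.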

The hyperscalers know this math as well as anyone. AWS, Google Cloud, and Azure have all rolled out inference-optimized instance types in 2026 that combine reserved pricing with guaranteed low-latency SLAs, and they price them at a 25-35% premium over standard GPU instances. AWS's new Inf2-Ultra instances, announced at GTC 2026 and benchmarked by the MSN piece published May 7, come in at $4.12 per GPU-hour on an 8-GPU node with 1,920 GB of aggregate HBM (240 GB per GPU, well above the 141 GB of an H200), backed by a 99.95% availability SLA. For a model provider running a customer-facing API, that premium buys something spot never can: the right to tell a paying customer that inference will not fail mid-token. Whether that SLA is worth $1.77 per GPU-hour more than spot H200 capacity at $2.35 depends entirely on the SLA the model provider is offering its own users. Most have not thought about it that way.
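One way to frame the SLA question is as a break-even on outage cost. A sketch under stated assumptions: the premium is paid on every GPU-hour, spot capacity is unavailable for some fraction of hours, and each unavailable hour costs the provider a fixed amount in refunds or lost revenue. The example uses the $1.77 premium on an 8-GPU node, a hypothetical 25% spot unavailability (in line with the 70-80% peak availability the article cites for standard spot), and the 0.05% implied by a 99.95% SLA; the function name is illustrative.

```python
def breakeven_outage_cost_per_hour(
    premium_per_gpu_hour: float,
    gpus: int,
    spot_unavailability: float,
    sla_unavailability: float,
) -> float:
    """Outage cost per hour above which the SLA premium is cheaper than running on spot.

    Premium paid per hour:      premium_per_gpu_hour * gpus
    Expected outage avoided:    (spot_unavailability - sla_unavailability) * outage_cost
    Setting them equal and solving for outage_cost gives the break-even point.
    """
    avoided = spot_unavailability - sla_unavailability
    if avoided <= 0:
        raise ValueError("the SLA tier must be more available than spot")
    return premium_per_gpu_hour * gpus / avoided


threshold = breakeven_outage_cost_per_hour(1.77, 8, 0.25, 0.0005)
print(round(threshold, 2))
```

Under these assumptions, if an hour of unavailable inference costs more than roughly $57, the SLA tier pays for itself; below that, spot plus engineering is cheaper.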

When Will Per-Token Prices Actually Catch Up to Spot?

The most striking disconnect in the GPU pricing story of May 2026 is not between spot and reserved — it is between GPU rental prices and the per-token prices showing up on model API invoices. On May 6, MSN reported that major LLM providers including OpenAI, Google, Anthropic, xAI, and DeepSeek have "sharply reduced API pricing" amid intensifying competition, with some models now costing "a fraction" of their 2025 prices. Yet in the same week, GPU rental contracts were climbing, neoclouds were reporting their strongest booking quarters ever, and the spot market was tightening. How can model API prices fall while compute prices rise?

The answer has three parts, none of them reassuring for anyone without hyperscaler-scale leverage. First, the largest model providers — OpenAI, Anthropic, Google — are not paying reserved or spot market rates. They are on bespoke infrastructure contracts with committed spend in the billions. OpenAI's Azure deal and Anthropic's CoreWeave deal give them effective per-GPU rates that are probably 50-70% below the posted reserved price. Second, inference optimization — quantization, speculative decoding, key-value caching — has improved throughput per GPU by roughly 3-4x since mid-2025, meaning the same $2.35/hr H100 delivers 3-4x more tokens than it did a year ago. Per-token cost for optimized inference has genuinely fallen even as per-GPU cost has risen. Third — and this is the part the press releases omit — model providers are using API price cuts to absorb market share now and betting that GPU supply increases and B200/NVL72 deployments in late 2026 will bring their costs down before the current pricing becomes unsustainable. It is a game of chicken played with billions in venture capital and hyperscaler balance sheets.
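The first two points reduce to simple ratios. A minimal sketch, assuming the 38% rise in the 1-year H100 rate from the opening stands in for the GPU price change (the paragraph does not pin both numbers to the same period) and a 3-4x throughput gain: per-token cost then lands at roughly 35-46% of its earlier level even though the GPU got more expensive. The second helper shows what a 50-70% discount off the $2.35 posted rate implies, roughly $0.71 to $1.18 per GPU-hour. Function names are illustrative.

```python
def relative_token_cost(gpu_price_multiplier: float, throughput_multiplier: float) -> float:
    """New per-token cost as a fraction of the old one.

    Per-token cost = price per GPU-hour / tokens per GPU-hour, so the ratio between
    two periods is the price ratio divided by the throughput ratio.
    """
    return gpu_price_multiplier / throughput_multiplier


def contracted_rate(posted_rate: float, discount: float) -> float:
    """Effective rate after a negotiated discount off the posted price (0.5 means 50% off)."""
    return posted_rate * (1 - discount)


for speedup in (3, 4):
    print(f"{speedup}x throughput, +38% price -> {relative_token_cost(1.38, speedup):.0%} of old cost")
print([round(contracted_rate(2.35, d), 2) for d in (0.5, 0.7)])
```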

For everyone else — the mid-tier model providers, the enterprise AI teams running fine-tuned open-weight models, the startups building inference-heavy products — the spread between the spot market and the published API prices is becoming the only margin that matters. A team running Llama-4-405B on 16 H100s in a private deployment, processing 500 million tokens a day, faces a daily compute bill of roughly $900 on spot versus $1,880 on reserved — a difference of $357,000 per year. That is the salary of two senior ML engineers. For a startup with 18 months of runway, running reserved is not prudence. It is self-destruction by spreadsheet.
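The totals in this example are easy to audit. A minimal sketch: the daily bill is GPUs × 24 × hourly rate, and the annual gap is the daily difference × 365, which gives $357,700 from the paragraph's $900 and $1,880 totals. Run backwards, those totals imply about $2.34 and $4.90 per GPU-hour for 16 GPUs running around the clock, while the $1.12 spot and $2.35 reserved rates quoted earlier put the same cluster at about $430 and $902 a day, so treat the hourly rates as inputs to replace with your own effective pricing. Function names are illustrative.

```python
HOURS_PER_DAY = 24


def daily_bill(gpus: int, price_per_gpu_hour: float) -> float:
    """Compute cost of running `gpus` around the clock for one day."""
    return gpus * HOURS_PER_DAY * price_per_gpu_hour


def implied_rate(daily_total: float, gpus: int) -> float:
    """Per-GPU-hour rate that a daily total implies for a cluster running 24/7."""
    return daily_total / (gpus * HOURS_PER_DAY)


def annual_gap(daily_a: float, daily_b: float, days: int = 365) -> float:
    """Yearly difference between two daily bills."""
    return abs(daily_b - daily_a) * days


print(annual_gap(900, 1_880))                          # the paragraph's spot vs. reserved totals
print(implied_rate(900, 16), implied_rate(1_880, 16))  # rates those totals imply for 16 GPUs
print(daily_bill(16, 1.12), daily_bill(16, 2.35))      # the same cluster at the rates quoted earlier
```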

Four neocloud sales reps, speaking off the record, said that the per-token price implied by today's reserved GPU contracts would show up on customer invoices in Q3 2027. Their reasoning: current reserved contracts were signed in the FOMO wave of late 2025 and early 2026 and run through mid-2027. When those contracts come up for renewal, enterprises will look at their 5% utilization numbers, look at the spot market, and refuse to re-up at $2.35/hr.

The spot market, in the meantime, is evolving its own hierarchy. At the top: "warm spot" — instances that are technically interruptible but have 60-minute preemption windows and 99%+ availability, offered by neoclouds to their best customers at roughly 15% below reserved. In the middle: standard spot, 30-second preemption notice, 70-80% availability during peak hours, at 45-55% below reserved. At the bottom: "cold spot" — deeply discounted instances in off-peak regions and time zones, sometimes as low as $0.60/hr for an H100 equivalent, with no availability guarantees and preemption that can happen mid-batch with zero warning. A few sophisticated inference stacks are now tiering their workloads across all three: latency-sensitive user-facing traffic on warm spot, batch processing on standard spot, and model evaluation and experimentation on cold spot. The cost curve that produces is not linear — it is a staircase where each step down in price demands a corresponding step up in engineering sophistication.
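The three-tier split can be expressed as a simple routing rule. A minimal sketch, assuming each workload declares how much preemption notice it needs to drain or checkpoint and how much peak-hour availability it can live with, and picking the cheapest tier that satisfies both. The class names, the per-workload thresholds, the 50% standard-spot discount (midpoint of the article's 45-55%), and the 0.70 availability (low end of its 70-80%) are illustrative choices, not any provider's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    price_per_gpu_hour: float
    notice_seconds: float  # warning given before preemption
    availability: float    # fraction of peak hours the capacity is actually there


@dataclass(frozen=True)
class Workload:
    name: str
    min_notice_seconds: float  # time the workload needs to drain or checkpoint
    min_availability: float


RESERVED_RATE = 2.35  # reference reserved H100 rate used throughout the article

TIERS = (
    Tier("warm spot", RESERVED_RATE * 0.85, notice_seconds=60 * 60, availability=0.99),
    Tier("standard spot", RESERVED_RATE * 0.50, notice_seconds=30, availability=0.70),
    Tier("cold spot", 0.60, notice_seconds=0, availability=0.0),
)


def cheapest_tier(workload: Workload, tiers: tuple[Tier, ...] = TIERS) -> Tier:
    """Pick the lowest-priced tier whose notice window and availability meet the workload's needs."""
    eligible = [
        t for t in tiers
        if t.notice_seconds >= workload.min_notice_seconds
        and t.availability >= workload.min_availability
    ]
    if not eligible:
        raise LookupError(f"no tier satisfies {workload.name}")
    return min(eligible, key=lambda t: t.price_per_gpu_hour)


for w in (
    Workload("user-facing chat", min_notice_seconds=300, min_availability=0.99),
    Workload("batch processing", min_notice_seconds=30, min_availability=0.70),
    Workload("model evaluation", min_notice_seconds=0, min_availability=0.0),
):
    print(f"{w.name} -> {cheapest_tier(w).name}")
```

With these thresholds the rule reproduces the article's split: user-facing traffic lands on warm spot, batch on standard spot, and evaluation on cold spot. Tightening a workload's requirements only ever moves it up the staircase.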

Carmen Li's Silicon Data index captured this stratification when it broke out pricing by commitment tier for the first time in March. The spread between the 90th-percentile reserved rate and the 10th-percentile cold spot rate for an H100 equivalent was $3.10/hr — a 5x multiple. In no other commodity market does the same asset trade at a 5x spread depending solely on the buyer's willingness to accept interruption risk. "That spread is a measure of market inefficiency," Li told Business Insider. "And inefficiency at that scale means someone, somewhere, is getting very rich."
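The two statistics pin down both ends of the range. Assuming the 5x multiple is exact, a $3.10 spread implies a 10th-percentile cold spot rate of about $0.78 and a 90th-percentile reserved rate of about $3.88 per GPU-hour. The helper name is illustrative.

```python
def rates_from_spread(spread: float, multiple: float) -> tuple[float, float]:
    """Solve high - low = spread and high = multiple * low for (low, high)."""
    if multiple <= 1:
        raise ValueError("multiple must exceed 1")
    low = spread / (multiple - 1)
    return low, low * multiple


low, high = rates_from_spread(3.10, 5)
print(f"10th-pct cold spot ~ ${low:.2f}/hr, 90th-pct reserved ~ ${high:.2f}/hr")
```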

The GPU you can actually buy, as MSN's March 16 piece on consumer GPU availability reminded readers, matters more than the one with the best benchmarks. The same principle governs the cloud GPU market but with higher stakes. The H200 and B200 are faster on paper. The H100 is what you can actually get provisioned this week. And between spot and reserved, the pricing gap on an H100 — $1.12 versus $2.35 versus $4.12 for SLA-backed — is the difference between a model API business that is cash-flow positive and one that burns $8 million a year on idle compute. The enterprises still signing 3-year reserved contracts at 5% utilization are not buying compute. They are buying the right to stop worrying. The invoice, when it lands, will not care about their feelings.

Watch for three things in the back half of 2026. First, the B200 and NVL72 deployments that begin shipping at scale in Q3: their per-token economics at batch size 1 will either collapse the premium on reserved H100s or — if supply remains constrained — simply add another rung to the pricing ladder without bringing the bottom down. Second, the first enterprise GPU contract renewal wave in Q4 2026: if even two Fortune 500 companies publicly announce a shift from 3-year reserved to a spot-heavy strategy, the signalling effect will be larger than any pricing announcement from AWS. Third, and most important: the per-token price on the cheapest model API invoice. When that number drops below $0.08 per million tokens — and it will — every GPU priced above $1.50/hr becomes a liability for anyone not running at batch size 32. The spot market already knows this. The reserved market is about to learn it.
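The threshold in that last point can be computed directly. A minimal sketch, assuming every generated token is billed at the API price and the GPU is busy for the full hour: at the batch-32 throughput used earlier (4,800 tok/s), a $0.08-per-million price covers a GPU rental of only about $1.38/hr, so at $1.50/hr even a fully batched H100 would be slightly underwater; at the 45 tok/s batch-1 rate the ceiling is about a cent per hour. The function name is illustrative.

```python
def breakeven_gpu_price(token_price_per_million: float, tokens_per_second: float) -> float:
    """Highest hourly GPU price that the token revenue from one GPU-hour can cover.

    Assumes every generated token is billed at the API price and the GPU is busy all hour.
    """
    tokens_per_hour = tokens_per_second * 3600
    return token_price_per_million * tokens_per_hour / 1_000_000


print(round(breakeven_gpu_price(0.08, 4_800), 4))  # batch 32 at 150 tok/s per sequence
print(round(breakeven_gpu_price(0.08, 45), 4))     # batch 1, top of the 30-45 tok/s range
```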