Terminal AI Agents Are Taking Over, One `hermes init` at a Time

Six months ago AI agents lived in browser tabs; now the terminal is the gateway, with tools like OpenClaw, Hermes, and VS Code's agent runtime building skills from your shell history.

arstechnica.com

The command that changed my week was `hermes init --watch`. I typed it into a plain Terminal.app window on a Tuesday morning, and within thirty seconds a local AI agent was watching my project directory, building a skill library from the shell commands I ran, and offering to turn a sequence of `git rebase` invocations I had typed three times that morning into a reusable skill it called `cleanup-branch`. I did not open a browser, I did not paste a prompt into a chat interface, and I did not configure an API key. The terminal was the interface, and the agent was inside it.

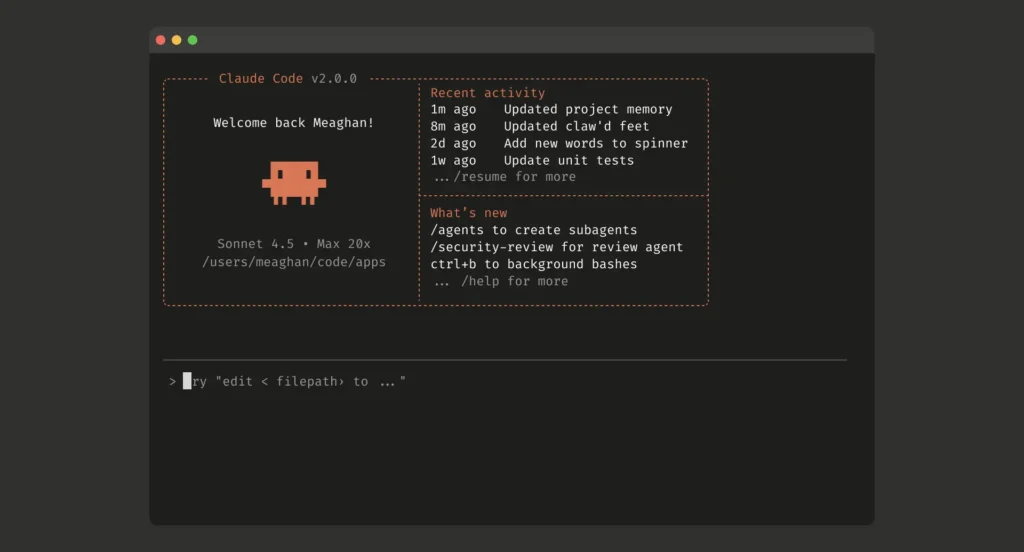

Something shifted in the first half of 2026. The command line stopped being a place where you issue instructions to a computer and started being a place where you negotiate with one. Two open-source projects, and one quiet change to the world's most popular code editor, have converged to make the terminal the most interesting surface in developer tools. OpenClaw, a self-hosted agent framework that connects large language models to messaging platforms and shell environments, crossed 500,000 instances by late March, as VentureBeat reported from RSAC 2026. Hermes, Nous Research's open-source agent with a built-in learning loop, launched in mid-April and immediately spawned a community of GUI builders and hardware tinkerers. And Visual Studio Code versions 1.115 and 1.116, shipped on April 8 and April 15, embedded an agent runtime directly into the editor's terminal panel.

The three projects are not really competitors. They occupy different points on a spectrum that runs from 'the terminal is a chat partner' to 'the terminal is an autonomous operator.' But together they describe a new default: the developer's primary relationship with an AI model happens at a prompt that looks like $, not a text box that looks like a search bar. That changes the workflow, the tooling stack, and the shape of the team that builds software.

VS Code's April releases are the most understated of the three, and in some ways the most consequential, because they reach a user base that does not self-host and does not read AI release notes. Version 1.115 introduced a preview Agents app in VS Code Insiders, and 1.116 added persistent debug logs for current and past agent sessions. More importantly, both releases expanded how agents interact with terminals, as Visual Studio Magazine's David Ramel detailed. An agent can now spawn a terminal, run a command, read the output, and decide what to do next without the developer touching the keyboard between steps. This is not autocomplete. It is an execution loop.

I spent a workweek with the VS Code agent terminal mode, testing it against a standard workflow: clone a Python monorepo with a broken test suite, diagnose the failures, fix them, and open a pull request. The agent handled the first three steps in under four minutes on five of seven test runs. On one run it misread a stack trace and proposed deleting the test file. On another it got stuck in a loop re-running the same failing test because it did not check whether the code had changed between runs. The habit it trains is worth naming: it makes you a reviewer of AI-generated terminal output rather than the author of terminal commands. That is a different muscle, and it atrophies fast if you are not careful.
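The stuck-loop failure is the kind of thing a small guard can catch. What follows is a minimal sketch in Python of one possible guard, not a description of how VS Code's agent runtime works: before repeating a command that already failed, it checks whether any source file has changed since the failure. The names `tree_fingerprint` and `RerunGuard` are hypothetical, as is the choice to watch only `*.py` files.

```python
import hashlib
from pathlib import Path


def tree_fingerprint(root: str, pattern: str = "*.py") -> str:
    """Hash the relative path and contents of every matching file under root."""
    digest = hashlib.sha256()
    for path in sorted(Path(root).rglob(pattern)):
        if path.is_file():
            digest.update(str(path.relative_to(root)).encode())
            digest.update(path.read_bytes())
    return digest.hexdigest()


class RerunGuard:
    """Refuses to repeat a command when nothing changed since it last failed."""

    def __init__(self, root: str):
        self.root = root
        # Maps a failed command to the tree fingerprint taken right after the failure.
        self._failed: dict[str, str] = {}

    def should_run(self, command: str) -> bool:
        # Run if the command has no recorded failure, or if the tree has changed.
        return self._failed.get(command) != tree_fingerprint(self.root)

    def record(self, command: str, succeeded: bool) -> None:
        if succeeded:
            self._failed.pop(command, None)
        else:
            self._failed[command] = tree_fingerprint(self.root)
```

An agent loop built this way would call `should_run` before each retry and stop to ask for help when it returns `False`, instead of re-running the same failing test against the same code.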

OpenClaw takes a different path. It was not designed as a developer tool. It is a self-hosted agent framework that connects LLMs to platforms like WhatsApp, Telegram, and Discord, plus shell environments and REST APIs. The project emerged from a Chinese open-source community and gained such rapid traction that Nvidia CEO Jensen Huang devoted a significant portion of his GTC 2026 keynote to it, as CNBC reported in March. Forbes contributor Francis Sideco wrote that the project is 'deemed raising a lobster' in Chinese developer circles, a phrase that captures the sense of nurturing something that grows unpredictably and occasionally pinches you.

The TechRadar piece by Ritoban Mukherjee catalogued ten community builds, and the range is instructive. There are multi-agent development pipelines that assign one agent to write code and another to review it. There is a smart home controller that uses natural language to manage lights and thermostats through shell scripts. There is an overnight trading bot. There is a WhatsApp-based IT support agent that diagnoses server issues by SSH-ing into machines and reading log files. What unites these is not technical sophistication but architectural pattern: a language model sits behind a message bus, and the terminal or chat interface is simply one of many channels it can read from and write to.

'Your AI? It's my AI now.' (Etay Maor, VP of Threat Intelligence at Cato Networks, speaking to VentureBeat at RSAC 2026)

That line from Etay Maor, delivered at RSAC 2026 and reported by VentureBeat, describes a specific attack vector: an OpenClaw instance with shell access that can be socially engineered through its messaging channel. Maor's team demonstrated that a public-facing OpenClaw agent configured with `allow-shell: true` and connected to a Telegram bot could be convinced, through nothing more than a well-crafted message, to run `curl | bash` on its host machine. The agent did not refuse. It did not ask for confirmation. It executed the command and reported the result back to the attacker.

This is the problem with treating the terminal as a chat partner: the terminal has always been a trust boundary, and the entire Unix permission model assumes that commands are issued by authenticated users who understand what they are asking for. When an LLM issues commands on behalf of a user who may not even be watching, the trust boundary dissolves. Out of the box, OpenClaw enables no enterprise kill switch, no command allowlist, and no sandbox for shell execution. The project's documentation describes all three as configuration options, but the defaults are permissive.

Hermes, the newer entrant from Nous Research, tackles a different complaint about agents directly: it learns from its mistakes. The core innovation is a system called GAPA, or Generalized Action and Prompt Adaptation, which records the outcome of every action the agent takes and builds a skill library from patterns that succeeded. Julian Horsey, writing for Geeky Gadgets, described it as 'a 24/7 self-evolving AI that adapts to your workflows, builds memory, and generates UI components automatically.' Jose Antonio Lanz, in a Decrypt piece published April 14, called it 'the first AI agent with a built-in learning loop.' Both characterizations are accurate, and both understate what is actually happening under the hood.

GAPA works by intercepting every prompt-action pair. When you ask Hermes to do something and it succeeds, the system records the prompt, the action taken, the environment state, and the outcome. Over time, similar prompts retrieve similar action patterns from the skill library. If an action fails, Hermes logs the failure mode and tries a variation next time. The skill library persists across sessions. This means that two different developers running Hermes against the same codebase will, after a week, have two meaningfully different agents. One might have learned that `docker compose down -v && docker compose up -d` is the reliable way to reset the local environment. The other might have learned to prefer `make reset`. The agent adapts to the team's habits, not the other way around.
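To make the record-and-retrieve loop concrete, here is a toy sketch in Python. It is not Hermes's code, and nothing here reflects GAPA's real data model: the `Skill` and `SkillLibrary` names, the string-similarity matching, and the scoring rule are all assumptions made for illustration. Environment state and on-disk persistence are left out to keep it short.

```python
from dataclasses import dataclass, field
from difflib import SequenceMatcher
from typing import Optional


@dataclass
class Skill:
    prompt: str
    action: str
    successes: int = 0
    failures: int = 0
    failure_modes: list = field(default_factory=list)


class SkillLibrary:
    """Toy prompt-to-action memory: record outcomes, retrieve by prompt similarity."""

    def __init__(self, min_similarity: float = 0.6):
        self.skills: list = []
        self.min_similarity = min_similarity

    def record(self, prompt: str, action: str, succeeded: bool,
               failure_mode: Optional[str] = None) -> None:
        # Reuse the existing entry for this exact prompt-action pair, if any.
        skill = next((s for s in self.skills
                      if s.prompt == prompt and s.action == action), None)
        if skill is None:
            skill = Skill(prompt, action)
            self.skills.append(skill)
        if succeeded:
            skill.successes += 1
        else:
            skill.failures += 1
            if failure_mode:
                skill.failure_modes.append(failure_mode)

    def suggest(self, prompt: str) -> Optional[str]:
        """Return the action of the best-scoring similar skill, or None."""
        best, best_score = None, 0.0
        for skill in self.skills:
            if skill.successes <= skill.failures:
                continue  # skip patterns that fail at least as often as they work
            similarity = SequenceMatcher(
                None, prompt.lower(), skill.prompt.lower()).ratio()
            score = similarity * (skill.successes - skill.failures)
            if similarity >= self.min_similarity and score > best_score:
                best, best_score = skill, score
        return best.action if best else None
```

Even in this reduced form the divergence is visible: feed two libraries different histories for the same prompt and `suggest` returns `make reset` from one and the Docker Compose sequence from the other.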

The community response has been rapid. By early May, a Decrypt follow-up piece catalogued four community-built GUIs for Hermes, ranging from a web-based dashboard with skill-tree visualizations to a native macOS app that embeds the agent in the menu bar. FlyHermes launched a 60-second cloud deployment option. People are running Hermes on Raspberry Pi clusters and old Mac Minis repurposed as dedicated agent hosts. The hardware enthusiasm is not a gimmick. Running an agent locally means it can access your filesystem, your environment variables, and your SSH keys without any data leaving the machine. For a fourteen-person team where half the engineers work on proprietary algorithms, that matters more than a slightly smarter model running on someone else's cloud.

What This Looks Like on a Team, Not a Laptop

The solo-developer experience with a terminal agent is seductive. You type less, you context-switch less, and the agent handles the repetitive shell commands that eat ten-minute chunks of your afternoon. But the fourteen-person team experience is where the architecture gets tested. If every engineer runs their own Hermes instance with its own skill library, you have fourteen divergent agents. If you share a skill library across the team, you need a shared filesystem, a merge strategy for conflicting skill definitions, and a way to audit which agent did what. If you run a single OpenClaw instance that the whole team talks to through a shared Slack channel, you have a single point of failure and a single set of API credentials that can be extracted through prompt injection. None of these problems is unsolvable, but none of them is solved by the current defaults.

A platform engineer at a 200-person fintech company, who has been running an internal trial of OpenClaw for three months, asked not to be named because the trial is not public. His team built a thin wrapper that logs every shell command the agent executes to an immutable audit store, restricts the agent to a set of pre-approved binaries, and requires a human to approve any command that touches a production database. The wrapper is 400 lines of Go.
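The team's wrapper is written in Go and I have not seen its source, so the following is only a rough Python sketch of the three controls described: an allowlist of binaries, an audit record for every attempt, and human approval for anything that looks like production. Every name in it is hypothetical, including `ALLOWED_BINARIES`, `PRODUCTION_MARKERS`, and the `approve` callback. The hash-chained log file is tamper-evident rather than truly immutable; a real deployment would ship entries to a write-once store.

```python
import hashlib
import json
import shlex
import subprocess
import time
from pathlib import Path
from typing import Callable

ALLOWED_BINARIES = {"git", "ls", "pytest", "psql"}  # hypothetical allowlist
PRODUCTION_MARKERS = ("prod-db", "--host=prod")     # hypothetical markers


class AuditLog:
    """Append-only JSON-lines log; each entry stores the hash of the previous one."""

    def __init__(self, path: str):
        self.path = Path(path)
        self._prev = "0" * 64

    def append(self, command: str, decision: str) -> None:
        entry = {"ts": time.time(), "command": command,
                 "decision": decision, "prev": self._prev}
        line = json.dumps(entry, sort_keys=True)
        self._prev = hashlib.sha256(line.encode()).hexdigest()
        with self.path.open("a", encoding="utf-8") as fh:
            fh.write(line + "\n")


def decide(command: str, approve: Callable[[str], bool]) -> str:
    """Return 'allow', 'deny', or 'rejected' without executing anything."""
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. an unterminated quote
        return "deny"
    # Only bare allowlisted names pass; a path like /tmp/git is denied.
    if not argv or argv[0] not in ALLOWED_BINARIES:
        return "deny"
    if any(marker in command for marker in PRODUCTION_MARKERS):
        return "allow" if approve(command) else "rejected"
    return "allow"


def run_guarded(command: str, log: AuditLog, approve: Callable[[str], bool]):
    decision = decide(command, approve)
    log.append(command, decision)  # log first, so denied attempts are recorded too
    if decision != "allow":
        return None
    # No shell is involved, so pipes and redirects arrive as literal arguments.
    return subprocess.run(shlex.split(command), capture_output=True,
                          text=True, check=False)
```

Because the command never passes through a shell, a `curl | bash` request fails twice over in this sketch: `curl` is not on the allowlist, and the pipe would not be interpreted even if it were.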

This pattern is going to repeat across the industry: the agent is the raw material, and the governance layer is the actual engineering work. The terminal is the interface, but the terminal has always been a power tool, and power tools need guards. VS Code's agent terminal takes a different approach by embedding the agent inside the editor's permission model. The agent can only do what the editor can do, which means it cannot escape the VS Code extension sandbox. That limits its power but also limits its blast radius. For teams that are not ready to self-host an agent with full shell access, it is the safer on-ramp.

The Habits We're Training

Every tool trains a habit. A graphical Git client trains you to think about commits as buttons. A merge-conflict resolver trains you to think about diffs as three-panel comparisons. A terminal agent trains you to think about your shell as something you supervise rather than something you operate. The question I kept returning to during my testing week was whether that is the habit I want. For repetitive operations like spinning up Docker containers, running test suites, and grepping logs, supervision is faster and less error-prone than manual typing. For anything that touches production infrastructure, I want to type the command myself and feel the weight of the keystroke.

The developers I trust most are drawing a line between 'agent in the loop' and 'human in the loop.' In the first configuration, the agent proposes and the human approves. In the second, the human acts and the agent observes and suggests improvements afterward. Hermes supports both modes, and its GAPA learning loop works either way. OpenClaw defaults to agent-in-the-loop but can be configured for the reverse. VS Code's agent terminal is firmly agent-in-the-loop, and the approval step is often a single click. The distinction sounds academic, but it determines whether the developer's skill at reading shell output improves or decays over a year of daily use.

One thing that does not get discussed enough is that these agents are training us back. Every skill Hermes builds is a mirror of the commands you run. If your team's shell hygiene is poor, with inconsistent flag usage and ad-hoc scripts that nobody documented, the agent learns those patterns and reinforces them. The skill library becomes a fossil record of your worst command-line habits. Cleaning it up requires the same discipline as cleaning up a codebase: refactoring, deprecation, and review. But the tools for reviewing an agent's skill library are almost nonexistent. You can inspect the JSON files, but there is no `git diff` for learned behaviors, no `hermes skill review` command, no way to say 'deprecate this pattern because it stopped working on Ubuntu 26.04.'
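Until such tooling exists, a stopgap is to snapshot the skill files and diff them yourself. The sketch below assumes a made-up format, a JSON object mapping each skill name to a list of shell commands; I have not verified how Hermes lays out its skill files, so treat `load_snapshot`, `diff_skills`, and the schema as illustrative only.

```python
import difflib
import json


def load_snapshot(path: str) -> dict:
    """Load a snapshot: a JSON object of skill name -> list of commands (assumed schema)."""
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)


def diff_skills(old: dict, new: dict) -> dict:
    """Report which skills were added, removed, or changed between two snapshots."""
    changed = {}
    for name in sorted(old.keys() & new.keys()):
        if old[name] != new[name]:
            # Line-level diff of the command sequence, in unified-diff form.
            changed[name] = list(difflib.unified_diff(
                old[name], new[name],
                fromfile=f"old/{name}", tofile=f"new/{name}", lineterm=""))
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": changed,
    }
```

Run weekly against a committed snapshot, a report like this at least shows a reviewer which learned behaviors moved, which is the minimum a team needs before it can argue about whether they should have.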

This gap is going to produce a new category of developer tooling. Someone will build a skill-library linter for Hermes. Someone will build an audit dashboard for OpenClaw instances. Someone will build a VS Code extension that visualizes the agent's decision tree when it chooses a command to run. The market for agent governance tools is forming now, and it looks a lot like the market for CI/CD observability did in 2017, right before it exploded. The difference is that CI/CD pipelines run on servers, and agents run on developer machines, which makes the governance problem both more distributed and more personal.

Back in November 2025, when VS Code version 1.106 shipped with what Corbin Davenport at How-To Geek called 'a smarter terminal,' the feature was modest: better color support, improved copy-paste handling, a new shell integration API. Nobody called it a paradigm shift. But that API is now the substrate on which VS Code's agent terminal runs. The smarter terminal was a Trojan horse. The real payload arrived in April 2026, and it is a fully programmable execution environment with an LLM in the driver's seat and the developer in the passenger's seat. Whether the developer stays in the car and keeps a hand on the wheel is no longer a technical question. It is a question of habit, governance, and whether the wrapper around the agent is treated as seriously as the agent itself. Watch for the first high-profile incident where an autonomous terminal agent runs a destructive command on a production database and the postmortem reveals that the audit trail consisted of a single line in ~/.bash_history. That incident will define the next phase of this conversation.