"Multi-agent" in Windsurf 5, Claude Code Teams, and Devin: what each one actually does

Five tools shipped multi-agent coding inside two weeks in February. I have used four of them on real work for thirty days. They are not the same product.

amirteymoori.com

In February, every major AI-coding tool shipped some version of "multi-agent" inside two weeks: Grok Build (8 agents), Windsurf 5 (5 parallel agents), Claude Code Agent Teams, Codex CLI with the Agents SDK, and Devin parallel sessions. I have used four of these for thirty days on real work, in the codebase I am writing this column from. They are not the same product. The vocabulary is shared; the workflows are not.

The work I tested them on: a four-day refactor of a legacy permissions module, a 12-issue green-field feature ticket batch, and a one-day "fix the flaky test suite" sprint. All in a 240-file TypeScript repo with a Postgres backend and a CI pipeline that takes 14 minutes on green.

Windsurf 5: 5 parallel agents on the same codebase

Windsurf's implementation is the simplest of the four to describe. You run /agents new on a checkout, and the IDE forks the working tree under the hood using git worktree rather than checking out a new branch. Each agent gets its own task description and merges back via PR. The model is good. The shared-cache layer, which keeps the five agents from each re-reading the same files, is genuinely good. What it does not do is coordinate: agent 3 and agent 4 will both happily edit the same file and then fight each other in the PR review. The tool surfaces the conflict; it does not prevent it.
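Because the conflict only shows up at PR time, one cheap guard is to compare what each agent is expected to touch before you start them. The sketch below is my own illustration, not a Windsurf feature: the AgentPlan shape and the file paths are hypothetical, and it assumes you can write down a rough file list per task up front.

```typescript
// Hypothetical shape: the files each agent's task is expected to edit.
interface AgentPlan {
  agent: string;
  files: string[];
}

interface Overlap {
  file: string;
  agents: string[];
}

// Return every file that more than one agent plans to edit.
function findOverlaps(plans: AgentPlan[]): Overlap[] {
  const owners = new Map<string, Set<string>>();
  for (const plan of plans) {
    for (const file of plan.files) {
      const agents = owners.get(file) ?? new Set<string>();
      agents.add(plan.agent);
      owners.set(file, agents);
    }
  }

  const overlaps: Overlap[] = [];
  owners.forEach((agents, file) => {
    if (agents.size > 1) {
      overlaps.push({ file, agents: Array.from(agents).sort() });
    }
  });
  // Sort by path so the report is stable between runs.
  return overlaps.sort((a, b) => a.file.localeCompare(b.file));
}

// Illustrative task split; the paths are made up.
const demoPlans: AgentPlan[] = [
  { agent: "agent-3", files: ["src/permissions/roles.ts", "src/permissions/index.ts"] },
  { agent: "agent-4", files: ["src/permissions/index.ts", "src/permissions/audit.ts"] },
];
console.log(findOverlaps(demoPlans));
```

If the list comes back non-empty, I would either merge the two tasks into one agent or sequence them, rather than let the PR review sort it out.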

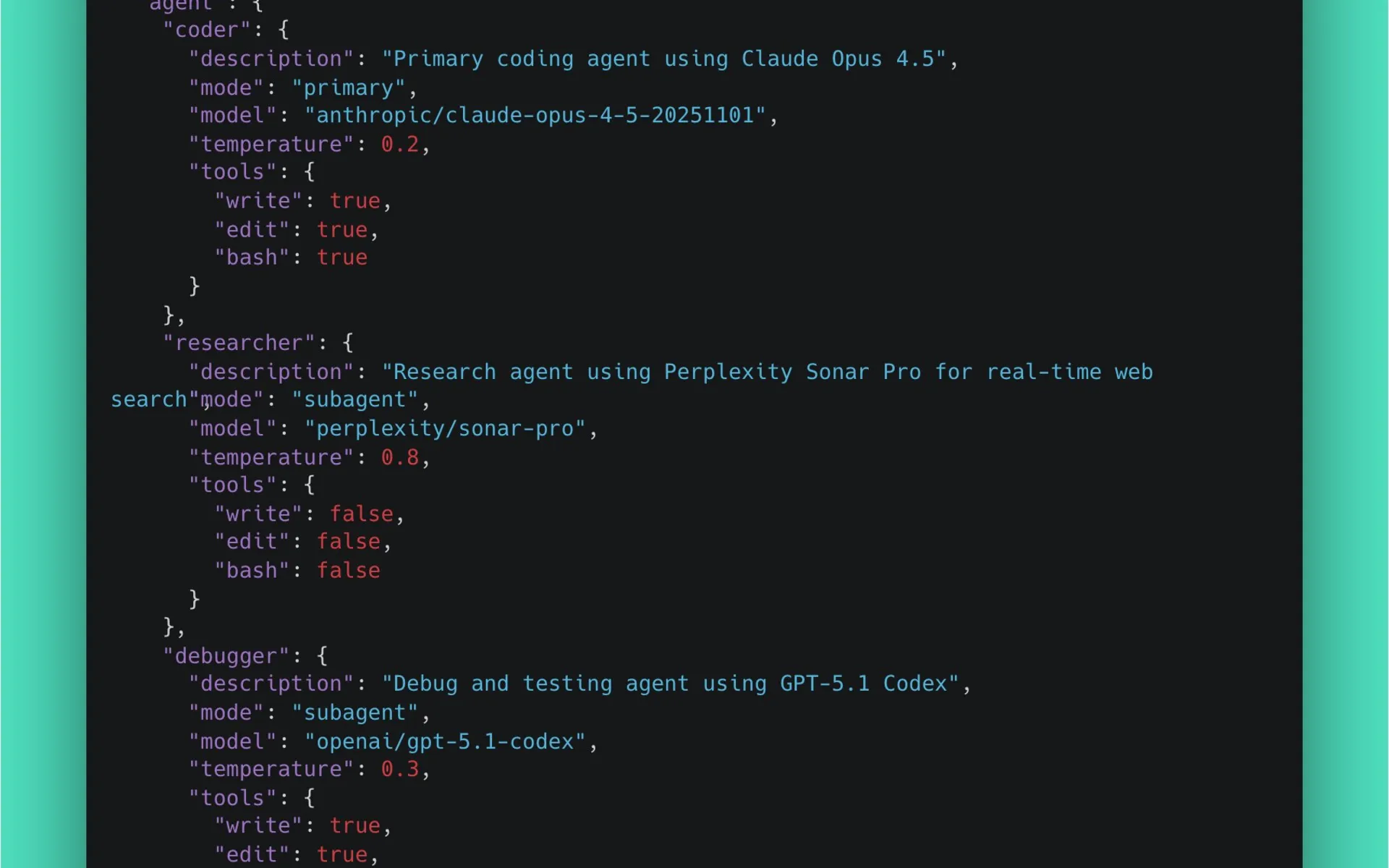

Claude Code Agent Teams

Claude Code's "Teams" is the most opinionated of the four. You define a team with explicit roles (implementer, reviewer, tester), and the agents run in a pipeline rather than in parallel. The implementer writes a draft, the reviewer comments, the tester runs the suite, and the implementer iterates. On the four-day refactor task, this took longer in wall-clock time than Windsurf 5 (10 hours against 6) and produced a better PR. On the 12-issue ticket batch, it was the wrong choice: a pipeline built around one artifact does not parallelize across independent tickets.
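The implementer, reviewer, tester loop can be sketched in a few lines. This shows the shape of the pipeline only: the Team interface, the method names, and the maxRounds cap are assumptions of mine, not the Claude Code API or its configuration format.

```typescript
// Hypothetical types; none of these come from Claude Code itself.
type Draft = { body: string; revision: number };
type Review = { approved: boolean; comments: string[] };
type TestRun = { passed: boolean; failures: string[] };

interface Team {
  implement(task: string, feedback: string[], previous?: Draft): Draft;
  review(draft: Draft): Review;
  test(draft: Draft): TestRun;
}

interface PipelineResult {
  draft: Draft;
  accepted: boolean;
  rounds: number;
}

// Run the roles in sequence and feed reviewer comments and test failures
// back to the implementer until both pass or the round budget runs out.
function runPipeline(team: Team, task: string, maxRounds: number): PipelineResult {
  if (maxRounds < 1) throw new Error("maxRounds must be at least 1");
  let feedback: string[] = [];
  let draft = team.implement(task, feedback);
  for (let round = 1; ; round++) {
    const review = team.review(draft);
    const tests = team.test(draft);
    if (review.approved && tests.passed) {
      return { draft, accepted: true, rounds: round };
    }
    if (round === maxRounds) {
      return { draft, accepted: false, rounds: round };
    }
    feedback = review.comments.concat(tests.failures);
    draft = team.implement(task, feedback, draft);
  }
}

// Stub team for illustration: the reviewer approves from the second revision on.
const stubTeam: Team = {
  implement: (task, _feedback, previous) => ({
    body: task,
    revision: (previous ? previous.revision : 0) + 1,
  }),
  review: (draft) =>
    draft.revision >= 2
      ? { approved: true, comments: [] }
      : { approved: false, comments: ["tighten the role checks"] },
  test: (_draft) => ({ passed: true, failures: [] }),
};
console.log(runPipeline(stubTeam, "refactor permissions module", 5));
```

Every stage waits on the one before it, which is why this shape costs wall-clock time on a single artifact and buys nothing on a batch of unrelated tickets.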

Devin parallel sessions

Devin's parallel sessions are the easiest to start and the hardest to monitor. You file a ticket; you get a PR back in some number of hours. On the green-field batch, this was excellent: 11 of 12 tickets came back as mergeable PRs, and I had to request changes on the twelfth. On the refactor, Devin made architectural choices I had to undo, and I did not see them happen. Visibility is the trade-off.

When to use which

- Windsurf 5 — when the work parallelizes across files and you can review five PRs.

- Claude Code Teams — when the work is a single artifact that benefits from multiple roles.

- Devin parallel sessions — when the tickets are small, well-specified, and you want to spend your day on architecture.

- Cursor (not multi-agent yet, despite the JetBrains plugin in March) — when the work is in your editor and you want real-time control.
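
The list above can be read as a rough decision rule. The sketch below encodes it; the WorkProfile fields and the order in which the checks run are my own assumptions, so treat it as a summary of the bullets rather than a benchmark result.

```typescript
type Tool =
  | "Windsurf 5"
  | "Claude Code Teams"
  | "Devin parallel sessions"
  | "Cursor";

// Hypothetical description of a piece of work; the field names are illustrative.
interface WorkProfile {
  singleArtifact: boolean;            // one PR that benefits from several roles
  smallWellSpecifiedTickets: boolean; // tickets you can hand off and walk away from
  splitsAcrossFiles: boolean;         // tasks touch mostly separate files
  canReviewParallelPRs: boolean;      // you have time to review five PRs
}

// The precedence of these checks is an assumption, not something I measured.
function pickTool(work: WorkProfile): Tool {
  if (work.singleArtifact) return "Claude Code Teams";
  if (work.smallWellSpecifiedTickets) return "Devin parallel sessions";
  if (work.splitsAcrossFiles && work.canReviewParallelPRs) return "Windsurf 5";
  return "Cursor"; // fall back to hands-on, real-time control in the editor
}

const refactor: WorkProfile = {
  singleArtifact: true,
  smallWellSpecifiedTickets: false,
  splitsAcrossFiles: false,
  canReviewParallelPRs: false,
};
console.log(pickTool(refactor));
```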

The category is not done. The shared problem none of them has solved is mid-flight rerouting: the moment when you realize the agent went down the wrong path two hours ago and you want to correct it without throwing the work away. The first tool to solve that is the one I will write about next.