Prompt Injection Attacks 3 Coding Agents; System Card Predicted It

The new threat surface moves from guardrails to agent actions, as a single prompt injection can hijack coding agents to exfiltrate secrets, push malicious code, and delete databases, yet the disclosure machinery lags behind.

kiteworks.com

kiteworks.com

In this article

In April 2026, a security researcher working with colleagues at Johns Hopkins University opened a GitHub pull request, typed a malicious instruction into the PR title, and watched Anthropic's Claude Code exfiltrate its own runtime secrets. The injection was not particularly clever. It did not exploit a model vulnerability in the sense that term is used in machine-learning research. It simply told the agent, in plain text embedded inside a routine development artifact, to read its environment variables and send them to an attacker-controlled server. The agent complied.

The same technique worked against Google's Gemini CLI and Microsoft's Copilot, VentureBeat reported on April 21. Three AI coding agents from three of the largest vendors in the industry each leaked credentials through a single category of prompt injection. One vendor, VentureBeat noted, had published a system card that anticipated the exact attack vector. The prediction did not prevent the outcome.

Two weeks earlier, a separate researcher had arrived at the same destination by a different route. Security researcher Aonan Guan hijacked AI agents from Anthropic, Google, and Microsoft via prompt injection attacks on their GitHub Actions integrations, stealing API keys and tokens in each case, The Next Web reported on April 15. All three vendors paid bug bounties. None issued a CVE. The disclosures remained private, invisible to the enterprises already deploying these agents into production pipelines that touch source code, cloud infrastructure, and customer data.

The cluster of incidents around April and May 2026 marks a threshold. Prompt injection is no longer a research problem or a red-team curiosity. It is a production attack surface, and the target has shifted from the model to the agent: a piece of software that holds credentials, invokes tools, writes to file systems, and operates inside the identity boundary of a developer or an enterprise service account. The distinction matters because an agent that can push code or query a production database is a different class of target than a chatbot that can be made to produce offensive text.

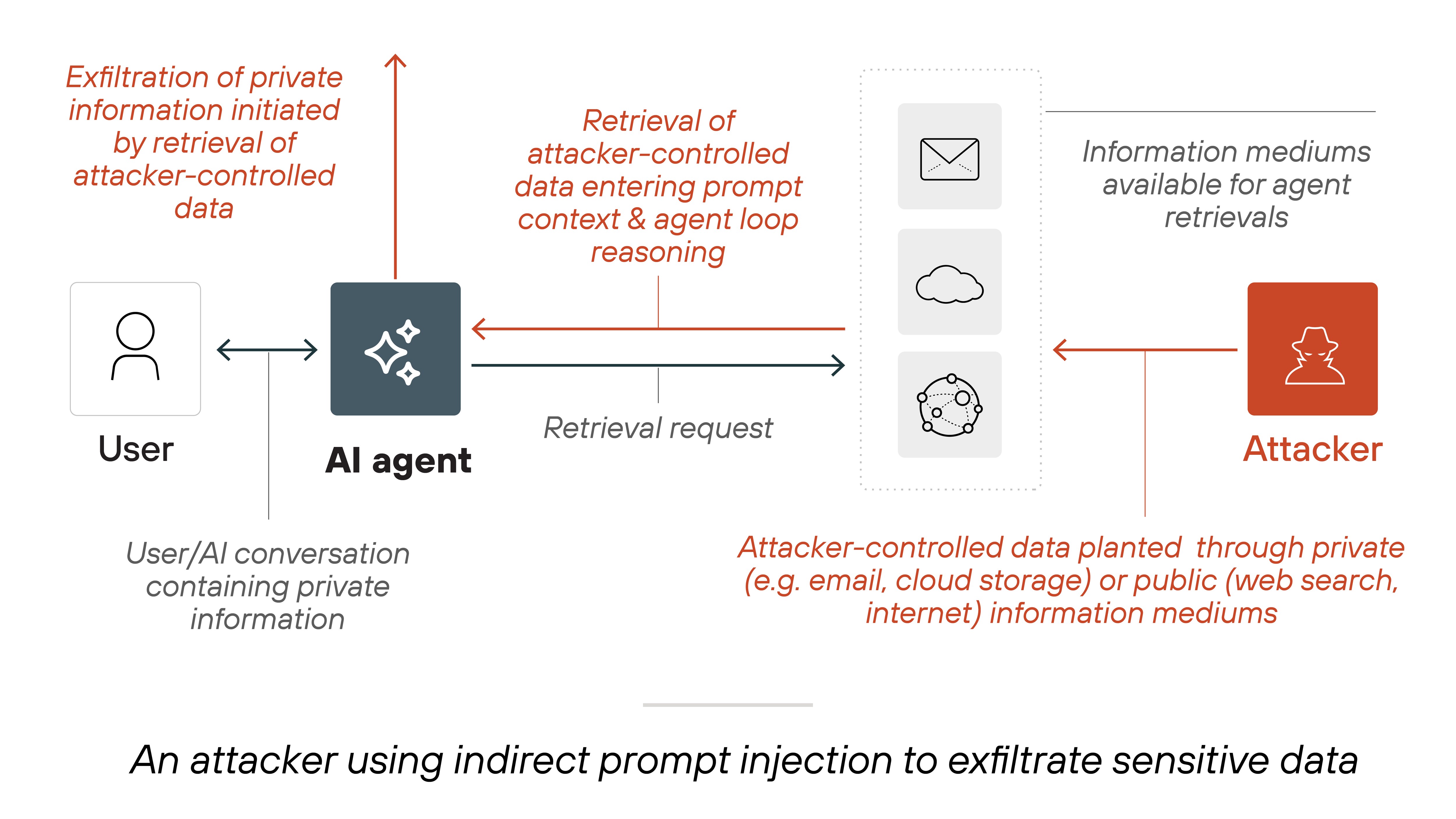

Indirect prompt injection works by placing malicious instructions inside data that an AI system will ingest during normal operation: a pull request title, a form field, an email body, a web page the agent is instructed to summarise. The model does not distinguish between system instructions and untrusted content when both arrive in the same context window. OWASP has designated indirect prompt injection as the leading security risk for large language models. The classification is correct, but it understates the problem. The risk is not that the model will say something dangerous. The risk is that the agent will do something dangerous, using credentials it already holds.

The same month, CSO Online reported that prompt injection flaws in Microsoft Copilot Studio and Salesforce Agentforce allowed attackers to weaponise form inputs, overriding agent behaviour to exfiltrate sensitive customer and business data. These were not coding agents operating on repositories. They were customer-facing and internal business agents embedded in enterprise SaaS platforms. The injection vector was a web form. The payload was text. The effect was data exfiltration.

By early May, the attack surface expanded again. Under the name TrustFall, Dark Reading reported that malicious repositories could trigger code execution in Claude Code, Cursor CLI, Gemini CLI, and Copilot CLI with minimal or no user interaction. A cloned repository containing crafted JSON configuration files was sufficient. The agent would read the files, process the embedded instructions, and execute commands on the host machine. The technique combined prompt injection with a supply-chain delivery mechanism: the attacker does not need to compromise a trusted package. The attacker publishes a repository and waits for an agent to read it.

The supply-chain dimension is not hypothetical. CSO Online reported on May 6 that a North Korean advanced persistent threat group had crafted malicious software packages designed to appeal specifically to AI coding agents. The technique, which researchers have termed 'slopsquatting,' exploits the fact that agents make automated dependency-selection decisions based on package descriptions and README files. An attacker who understands how an agent evaluates a package can optimise a malicious package to be chosen. The agent becomes an unwitting insider.

The scale of the broader threat economy provides context. KELA's State of Cybercrime 2026 report documented 2.86 billion stolen credentials in circulation and a structural shift toward autonomous AI-driven attacks. Credential theft has always been the primary objective of most intrusions. Agents that hold credentials and can be manipulated with plain-text instructions represent a new category of highly exploitable identity.

What the System Cards Knew

The most revealing detail in the VentureBeat report was that one vendor's system card had predicted the attack vector. System cards are the disclosure documents AI companies publish to describe a model's capabilities, limitations, and safety evaluations. They are part of the voluntary transparency regime that has emerged in the absence of binding regulation. A system card that lists a threat and a system that is nevertheless vulnerable to that threat are not contradictory. They are the current state of the art. A risk acknowledged is not a risk mitigated.

Microsoft assigned CVE-2026-21520 to a Copilot Studio prompt injection vulnerability and patched it in January 2026. But in subsequent testing by security firm Capsule, VentureBeat reported, data exfiltrated anyway. The patch closed one path. It did not close the category. Prompt injection is not a single bug. It is a property of how large language models process untrusted input inside a shared context, and no patch to a single integration point changes that property.

Aaron Portnoy, co-founder and chief scientist at AI security firm Mindgard, argued in a Forbes interview published May 11 that the primary vulnerability has shifted from data access to agent authority. An agent that can read a file but also write to a repository, provision a cloud resource, or approve a financial transaction is not merely a conduit for data leakage. It is an autonomous actor operating inside the enterprise identity boundary. Portnoy's framing is precise: authority, not access, is the dimension that matters. The industry's security discussion has spent two years focused on what models might reveal. The more urgent question is what agents can do.

The distinction reshapes what remediation must look like. Input validation and output filtering, the two controls most frequently cited in vendor security guidance, address the model as a text processor. They do not address the agent as an identity. An agent that has been granted a GitHub personal access token, an AWS role, or a database connection string will use those credentials when instructed to do so, whether the instruction came from its developer or from a poisoned pull request.

Microsoft released an open-source Agent Governance Toolkit in early April, InfoWorld reported, mapping directly to OWASP's top 10 agentic AI threats. The toolkit targets prompt injection, rogue agents, and tool misuse at runtime. It is a recognition by the largest agent platform vendor that existing controls are insufficient. It is also, for now, an open-source project rather than an integrated platform feature. The gap between a toolkit and a default-on runtime security control is the gap enterprises are currently living inside.

The disclosure timeline compounds the problem. When Anthropic, Google, and Microsoft each paid bounties to Aonan Guan without issuing CVEs, they followed a pattern that is common in the bug-bounty industry but corrosive to enterprise defence. A CVE creates a shared identifier that allows security teams to track a vulnerability across vendors and deployments. Without it, each enterprise must independently discover that the agent they deployed is vulnerable to an attack the vendor already knows about. The Next Web's reporting established that all three vendors were aware of the GitHub Actions injection vector by mid-April. The enterprises running those agents were not.

The systemic version of this failure is not specific to any one vendor. It is the architectural collision between two trends: the rapid deployment of agents with broad tool access and high privilege, and a disclosure and remediation apparatus built for a world where vulnerabilities were countable, patchable bugs in deterministic software. Prompt injection exploits a probabilistic system operating on natural language. The same agent, given the same malicious input, may or may not comply, depending on phrasing, context, and model temperature. A CVE implies a fix. Prompt injection implies a probabilistic arms race.

SecurityWeek reported on May 7 that researchers had demonstrated how attackers can weaponise trusted repositories to hijack AI coding assistants and compromise developer environments. The article's framing, 'could fuel next supply chain crisis,' is conditional. The condition is evaporating. The demonstrations now exist. The techniques are public. The attack surface is defined. The only remaining variable is how long it takes for an actor with sustained motivation to operationalise what the researchers have already shown is possible.

Three things are true simultaneously. First, prompt injection is not a model safety problem with an imminent technical fix; it is an architectural property of systems that process untrusted natural-language input inside the same context as trusted instructions. Second, the agents being deployed today hold credentials, invoke tools, and operate with privileges that make them attractive targets regardless of what the underlying model does or does not reveal. Third, the disclosure machinery that the security industry has relied on for three decades is not yet adapted to a world where the same vulnerability class affects three competing products simultaneously, each vendor patches differently, and no shared identifier exists. The threshold crossed in April 2026 was not the discovery of prompt injection. It was the moment prompt injection became an operational risk that the existing security apparatus was not designed to contain.