Modular Data Centre Buildouts Reshape the $1.37 Trillion AI Infrastructure Race

Manufacturing consolidation, optical-network upgrades, and cooling-first architectures are compressing deployment timelines as prefabricated facilities and substations rewrite the AI build schedule.

techzine.eu

On 31 March 2026, Compu Dynamics Modular announced from its headquarters in Chantilly, Virginia, that it had acquired a majority stake in R&D Specialties, a Texas-based manufacturer of prefabricated electrical and mechanical assemblies. The deal, carried by GlobeNewswire and picked up by Business Insider, was small enough to escape wider notice. It ought not to have. Gartner had already put a number in front of the industry: $1.37 trillion, its January estimate for worldwide AI infrastructure spending in 2026, a figure representing over 54 percent of total AI spending and a 43 percent leap over 2025 levels, as reported by CRN.
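The Gartner percentages imply two figures that are not stated directly: the 2025 infrastructure baseline and the size of total AI spending in 2026. A rough back-calculation is sketched below. It assumes the 43 percent growth and the 54 percent share both apply to the same $1.37 trillion figure, and it treats "over 54 percent" as exactly 54 percent, so the implied total is an upper bound rather than a reported number.

```python
# Back-of-envelope figures implied by the Gartner numbers cited above.
# Assumption: "over 54 percent" is treated as exactly 54 percent, so the
# implied total AI spend is an upper bound, not a reported figure.

AI_INFRA_2026_USD = 1.37e12   # Gartner estimate for 2026
GROWTH_OVER_2025 = 0.43       # 43 percent increase over 2025
SHARE_OF_AI_SPEND = 0.54      # infrastructure share of total AI spending

# Undo the growth to recover the implied 2025 baseline.
implied_infra_2025 = AI_INFRA_2026_USD / (1 + GROWTH_OVER_2025)

# Divide by the share to recover the implied total AI spend for 2026.
implied_total_ai_2026 = AI_INFRA_2026_USD / SHARE_OF_AI_SPEND

print(f"Implied 2025 AI infrastructure spend: ${implied_infra_2025 / 1e12:.2f} trillion")
print(f"Implied 2026 total AI spend (upper bound): ${implied_total_ai_2026 / 1e12:.2f} trillion")
```

On these assumptions the 2025 baseline comes out at roughly $0.96 trillion, and total AI spending in 2026 at no more than about $2.54 trillion.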

The Chantilly deal is not an outlier. Eight days later, on 8 April, Mission Critical Group of McKinney, Texas, announced its own bolt-on: the acquisition of TxLa Systems, a switchgear and modular power manufacturer, expanding MCG's capacity to deliver integrated power systems for data centres on compressed schedules. These are not the transactions that dominate earnings calls. They are, however, the transactions that reveal where the physical infrastructure of AI compute is actually being assembled, and at what pace.

Between 30 percent and 50 percent of data centre development projects currently face major delays, Bisnow reported in March, citing studio and engineering sources. The bottleneck is not demand; the demand is voracious and well-catalogued. The bottleneck is the stick-built construction timeline: site grading, foundation curing, steel erection, electrical rough-in, commissioning. Each step runs sequentially, and each step has grown slower as project scale has swollen beyond anything the trades can staff.

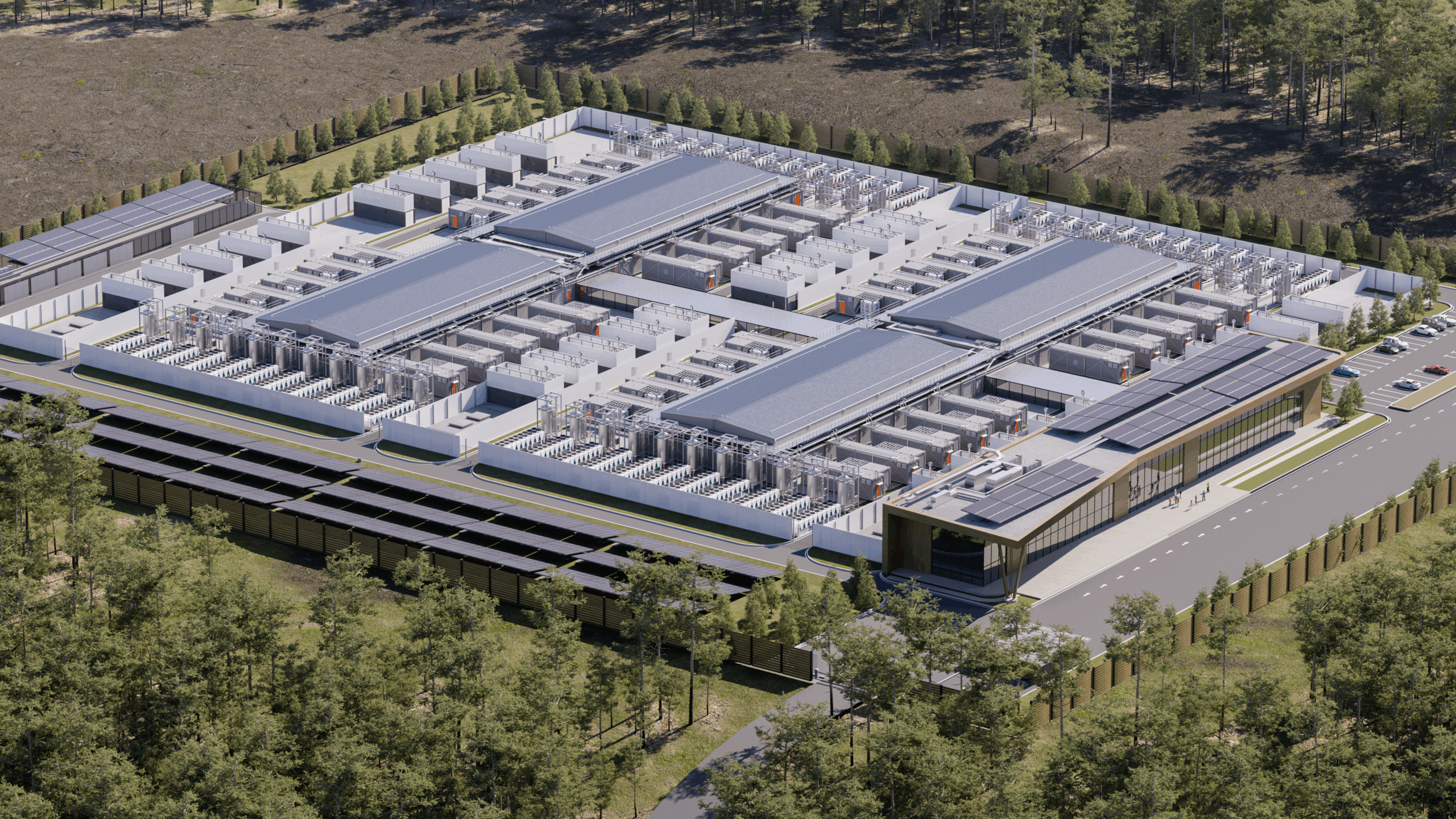

Modular construction attacks the timeline from both ends. Factory-built server rooms, power skids, and cooling modules are assembled in parallel with site preparation, then trucked to location and connected in weeks rather than months. A prefabricated 2 MW data centre unit, of the sort that DMG Blockchain Solutions received at its Christina Lake facility in British Columbia in early April, arrives on a flatbed with busbars, containment, and cooling already integrated. The site needs a pad, a utility connection, and a network feed. Everything else was bolted together under a roof in a controlled environment where quality inspection does not contend with weather.

The manufacturing capacity to produce these modules at scale is what the Chantilly and McKinney acquisitions are about. CDM's purchase of R&D Specialties brings in-house the fabrication of custom electrical assemblies, power distribution units, and mechanical frames that would otherwise sit in a six-month queue at a third-party shop. MCG's absorption of TxLa Systems adds switchgear manufacturing, the component set that stands between a utility transformer and the rows of server racks, and one of the longest-lead items in the entire data centre supply chain.

The supply chain for switchgear, in particular, has become a quiet determinant of who can build and who must wait. Lead times for medium-voltage switchgear stretched past 80 weeks in some North American markets in 2025, according to transmission planners. A modular manufacturer that owns its switchgear fabrication can cut that wait to something closer to 20 weeks. The arithmetic is simple and brutal: the company that controls its switchgear supply controls its delivery schedule.

Gartner's $1.37 trillion figure breaks down into hardware categories that are well understood: servers, storage, networking equipment, GPUs, and the racks and power infrastructure that hold them. But the CRN AI 100 list for 2026, which named 25 infrastructure and edge computing companies in April, captured something less obvious. The list includes not only the expected names (Cisco Systems, Hewlett Packard Enterprise, Lenovo, Nvidia) but also software-defined infrastructure companies such as Nutanix and data-resilience platforms like Cohesity and Veeam. The hardware buildout is pulling a software ecosystem along with it.

The edge computing slice of that list is particularly instructive. Companies such as Acer, with its Veriton GN100 AI Mini workstations powered by Nvidia's Grace Blackwell GB10 Superchip, and Cisco, with its AI-ready edge platform and Hybrid Mesh Firewall, are positioning for inference workloads that will not run in hyperscale campuses. These devices need local power, local cooling, and local connectivity. They are, in effect, micro data centres that happen to sit in a retail back office or on a factory floor rather than in a 200 MW campus outside Ashburn.

The network layer that connects these distributed nodes to the core is undergoing its own capacity expansion, and the numbers are beginning to surface in earnings reports. Nokia's first-quarter 2026 results, released in late April, showed a 54 percent jump in operating profit and a 49 percent surge in AI and cloud-related sales. The company raised its 2026 Network Infrastructure growth target to between 12 and 14 percent, with its Optical and IP division now expected to grow 18 to 20 percent, The Motley Fool noted in its markets coverage.

Justin Hotard, Nokia's president and chief executive, told analysts that net sales grew 4 percent to EUR 4.5 billion with an operating margin of 6.2 percent, framing the quarter as a stronger start driven by Network Infrastructure and cloud demand. The optical networking segment, which provides the high-speed interconnects between data centre buildings and between metro-area facilities, benefited directly from the sheer volume of new floor space coming online and the requirement that every square metre be reachable at line rate.

What connects the Chantilly factory floor to Nokia's optical transport bookings is a shared dependency on speed. A modular data centre that can be delivered in 16 weeks is useless if the fibre backhaul takes 18 months to permit and trench. The industry is learning that prefabrication must extend beyond the building envelope and into the network path, the power feed, and the cooling loop. Anything that cannot be modularised becomes the new critical path.
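The critical-path point can be made concrete with the lead times quoted in this article. The sketch below is illustrative only: it assumes the three tracks run fully in parallel, converts 18 months to 78 weeks, and takes the 80-week and 20-week switchgear figures at face value. The track names and the `critical_path` helper are hypothetical.

```python
# Illustrative critical-path comparison using lead times quoted in the article.
# Assumptions: tracks run fully in parallel; 18 months is taken as 78 weeks;
# switchgear lead times are the article's 80-week and 20-week figures.

def critical_path(tracks):
    """Return (name, weeks) of the longest track in a set of parallel tracks."""
    name = max(tracks, key=tracks.get)
    return name, tracks[name]

third_party = {
    "module_delivery": 16,           # modular data centre delivered in 16 weeks
    "switchgear": 80,                # third-party medium-voltage switchgear
    "fibre_backhaul": 18 * 52 / 12,  # 18 months to permit and trench
}

# Same project, but with switchgear fabricated in-house.
in_house = dict(third_party, switchgear=20)

before = critical_path(third_party)
after = critical_path(in_house)
weeks_saved = before[1] - after[1]

print(f"Third-party switchgear: critical path is {before[0]} at {before[1]:.0f} weeks")
print(f"In-house switchgear:    critical path is {after[0]} at {after[1]:.0f} weeks")
print(f"Schedule improvement:   {weeks_saved:.0f} weeks")
```

Under these assumptions, cutting switchgear from 80 weeks to 20 shortens the project by only two weeks, because the fibre backhaul immediately takes over as the longest track.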

What the substation says about the schedule

For all the attention paid to server racks and GPU clusters, the binding constraint on modular deployment is increasingly the local substation. A prefabricated data centre module drawing 2 MW can be manufactured in weeks, but the transformer that steps down utility voltage to serve it remains a 52-to-80-week procurement item in most North American markets. The substation does not modularise easily. It requires site-specific engineering, utility coordination, and often a network-upgrade study that examines contingency scenarios two levels up the transmission system.

This is where the ownership question becomes acute. Who pays for the grid upgrade, and who benefits? A hyperscaler that commits to a 200 MW campus can justify the cost of a new substation and a transmission-line extension; those costs amortise across a decade of compute revenue. A modular edge deployment serving a single manufacturing plant or a regional hospital cannot. The regulatory frameworks that govern cost allocation for distribution-level upgrades were not written for a world in which data centres are the fastest-growing load class on the system.

The differential between contracted load and connected load, a distinction that utility commissioners understand intimately but that few outside the power sector appreciate, is beginning to surface in site-selection conversations. A data centre developer may hold a signed contract for 50 MW of utility service, but the connected load, the amount the local substation can actually deliver today, may be 12 MW. The gap is the substation upgrade timeline, and it is measured in years.
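A quick calculation shows what that gap means in deployable hardware. This sketch uses the 50 MW and 12 MW figures above and the 2 MW prefabricated module size mentioned earlier. It assumes, for simplicity, that each module draws its full 2 MW and ignores redundancy and auxiliary loads. The `energisable_modules` helper is hypothetical.

```python
# Contracted versus connected load, expressed in 2 MW modules.
# Assumptions: each module draws its full 2 MW; redundancy and auxiliary
# loads are ignored.

MODULE_MW = 2        # prefabricated module size cited in the article
CONTRACTED_MW = 50   # utility service under contract
CONNECTED_MW = 12    # what the local substation can deliver today

def energisable_modules(available_mw, module_mw=MODULE_MW):
    """Number of whole modules that the available capacity can power."""
    return int(available_mw // module_mw)

gap_mw = CONTRACTED_MW - CONNECTED_MW
modules_today = energisable_modules(CONNECTED_MW)
modules_contracted = energisable_modules(CONTRACTED_MW)
modules_waiting = modules_contracted - modules_today

print(f"Capacity gap: {gap_mw} MW")
print(f"Modules that can be energised today: {modules_today} of {modules_contracted}")
print(f"Modules waiting on the substation upgrade: {modules_waiting}")
```

On these numbers, six modules could be powered today, while nineteen would sit idle until the substation upgrade is complete.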

Modular data centre manufacturers are responding by integrating more of the electrical infrastructure into the factory-built package. The UNICOM Engineering and Fourier Cooling collaboration, announced on 28 April from Plano and Austin, Texas, exemplifies the approach. The two companies are delivering what they describe as cooling-defined modular AI infrastructure, where liquid-cooled platform design and direct-to-chip cooling are integrated at the factory rather than retrofitted on site. The cooling loop is not an afterthought bolted onto a server rack; it is the organising principle of the module.

This cooling-first philosophy represents a genuine departure from the air-cooled era. In a traditional data centre, cooling is a building-level system: chillers on the roof, air handlers on the floor, raised-floor plenums distributing cold air. In a cooling-defined modular architecture, each sealed rack or row contains its own liquid loop, and the building envelope becomes a weather shelter rather than a thermal-management system. The shift reduces the construction scope that must happen on site and moves more of the quality-critical work into the factory.

Power density is pushing in the same direction. A single Nvidia GB200 NVL72 rack can draw upwards of 120 kW. Air cooling cannot remove that much heat from a single cabinet at any economically sensible air velocity. Direct-to-chip liquid cooling is not optional for these deployments; it is a prerequisite. And direct-to-chip cooling, with its manifolds, quick-connects, coolant-distribution units, and leak-detection systems, benefits enormously from factory integration. A poorly torqued fitting that leaks in the field takes down a multi-million-dollar compute cluster. The same fitting torqued and tested on a factory jig is a known quantity.
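The air-cooling limit follows from the sensible-heat relation Q = ρ · V · c_p · ΔT, which gives the volumetric airflow V needed to carry away a heat load Q. The sketch below applies it to a 120 kW rack. The air properties are standard approximate values for room-temperature air, and the 15 K temperature rise across the rack is an assumed, illustrative figure, not one taken from any vendor specification.

```python
# Airflow needed to remove a rack's heat with air alone.
# Assumptions: air density and specific heat are approximate values for
# room-temperature air; the 15 K inlet-to-outlet temperature rise is illustrative.

AIR_DENSITY_KG_M3 = 1.2      # approximate density of air
AIR_CP_J_PER_KG_K = 1005.0   # approximate specific heat of air
M3S_TO_CFM = 2118.88         # cubic metres per second to cubic feet per minute

def airflow_required_m3s(heat_w, delta_t_k):
    """Volumetric airflow (m^3/s) needed to absorb heat_w watts at a delta_t_k rise."""
    return heat_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)

rack_heat_w = 120_000  # 120 kW rack, as cited in the article
flow_m3s = airflow_required_m3s(rack_heat_w, delta_t_k=15.0)
flow_cfm = flow_m3s * M3S_TO_CFM

print(f"Required airflow: {flow_m3s:.2f} m^3/s (about {flow_cfm:,.0f} CFM)")
```

With these inputs the requirement is about 6.6 cubic metres of air per second, or roughly 14,000 CFM, through a single cabinet. A smaller temperature rise would push the figure higher still.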

The buildout is also forcing a reconsideration of what the building is actually for. A traditional data centre is a building that contains computers. A modular data centre, particularly in its cooling-defined variant, is a computer that happens to have a weatherproof skin. The distinction matters because it changes who can build it. General contractors who pour concrete and hang drywall are not natural manufacturers of liquid-cooled server enclosures. That work belongs to firms like CDM, MCG, and Fourier, companies that sit at the intersection of industrial manufacturing and mission-critical engineering.

A further consequence of the modular shift is geographic. Factory-built modules can be manufactured wherever labour, materials, and logistics converge favourably, then shipped to sites where construction labour is scarce or expensive. This decoupling of manufacturing location from deployment location is new in the data centre industry, which has historically built on site because the building was too large and too integrated to move. The truckable module changes the economic geography of the sector, and the acquisitions in Chantilly and McKinney are early moves in a consolidation that will determine where the manufacturing capacity concentrates.

Edge deployments add a final dimension to the modular logic. A modular unit designed for a hyperscale campus can be scaled down to a single-rack enclosure for a factory, a cell-tower site, or a port authority. The CRN AI 100's inclusion of edge-native infrastructure, from AMD's embedded processors to Cisco's edge networking platforms to Acer's mini-workstations, signals that the same supply chain that serves the 200 MW campus is being asked to serve a thousand 200 kW sites. The economics only work if those thousand sites receive factory-integrated modules rather than bespoke on-site builds.

The checkpoint to watch is the second half of 2026, when the current wave of modular manufacturing capacity expansions (CDM's enlarged Virginia facility, MCG's Texas switchgear line, Fourier's cooling-integration capacity) begins delivering against the order book. If lead times for prefabricated data centre modules contract meaningfully, the industry will have evidence that the factory model works at scale. If they do not, the constraint will have moved elsewhere, perhaps to the substation, perhaps to the fibre, perhaps to the specialised labour pool that understands how to commission a direct-to-chip liquid loop. One of those will become the new critical path, and the market will name it by the end of the year.