Input Methods Evolve Faster Than Accessibility Can Keep Up

For Meta's sEMG wristband and Neuralink's thought-controlled gaming, frictionless control is the promise—but the friction, it turns out, is the point.

On a Tuesday morning in a Stockholm apartment, Lena — a 54-year-old former graphic designer with essential tremor — lifts her hand toward her partner's Vision Pro, borrowed for the hour. She is trying to select a photo. The headset's outward-facing cameras track her pinch gesture, or try to: her tremor registers as a cascade of micro-gestures, the cursor jumping between three thumbnails before selecting the wrong one. She lowers her hand, exhales, and says what anyone who has spent time with spatial computing already knows: 'It assumes my hands are steady.' No one in the demo video had a tremor. No one in the demo video was sixty-two, or had arthritis, or was missing a finger. The demo video was shot in a room with diffuse 5500K lighting and zero coffee cups on the table. Lena's kitchen has a window that faces east.

The input landscape is shifting faster than at any point since the capacitive touchscreen ate the QWERTY keyboard. In the past eighteen months, the industry has delivered or previewed: Meta's surface electromyography (sEMG) wristband, which reads neural signals at the wrist to detect finger movements you do not visibly make; Neuralink's implant that let a paralyzed British Army veteran raid dungeons in World of Warcraft using thought alone; Apple's hand-and-eye tracking on Vision Pro, now shipping to anyone with $3,499; Samsung's Galaxy XR with its own gesture lexicon; and a first-of-its-kind commercial brain implant approval out of China. Each of these is presented as a step toward interfaces that are more 'natural' than tapping glass. Natural for whom, exactly, is the question worth asking before the defaults get baked into silicon.

Meta's sEMG wristband is the most interesting of the bunch because it is the most mundane. It looks like a watch strap with electrodes. You wear it at the wrist, and it picks up the electrical signals your brain sends to your hand muscles: signals that fire even if your hand is resting in your lap, or in your pocket, or cannot physically move the way a demo requires. In March, Meta announced it was funding six external university teams to study how people learn and adapt to sEMG input, selecting them from seventy global applicants, as Scott Hayden reported at Road to VR. The research, according to Meta's blog post, covers 'motor learning, sensory feedback, and user adaptation.' The phrase 'user adaptation' is doing a lot of work there. It implies the user will adapt to the device, not the other way around, and for a technology that detects microvolt-level muscle signals, the gap between what a healthy twenty-eight-year-old engineer's forearm produces and what a seventy-year-old's produces after a stroke is not a gap you close with a calibration wizard.

What makes EMG genuinely promising for accessibility is that it does not require visible movement. Someone with a spinal cord injury who retains some motor neuron activity in the forearm — a not-uncommon scenario in incomplete injuries — could theoretically generate clean sEMG signals for clicking, scrolling, and typing without ever lifting a finger. The problem is that 'theoretically' is where most of these technologies live. Meta's current hardware was developed for AR glasses interaction, a consumer use case where the primary design constraint is that you should not look weird gesturing at your glasses in a café. Making the leap from 'socially acceptable pinch' to 'reliable input method for someone with spasticity' requires the kind of sustained clinical validation that consumer electronics companies are not set up to fund. The six university grants are a start, but they are academic studies, not product commitments.

Then there is the brain-computer interface cohort, led by Neuralink and Synchron, where the accessibility story is simultaneously the strongest and the most precarious. In late March, Neuralink identified its eighteenth trial participant, Jon L. Noble, a British Army veteran paralyzed below the neck from a spinal cord injury. Noble was raiding in World of Warcraft — moving a cursor, targeting enemies, coordinating with a party — entirely through decoded neural signals, just eighty days after surgery. The video is genuinely affecting. It is also the kind of narrative that makes people talk about keyboards and screens becoming 'obsolete,' as a March TechTimes piece speculated. Keyboards are not becoming obsolete. A $40,000 brain surgery plus an implanted chip plus a machine-learning pipeline that needs recalibration every few hours is not an upgrade from a $20 Logitech.

The risk with BCI narrative capture is that it makes input accessibility look like a solved problem — or worse, a problem that only requires a sufficiently advanced implant. In reality, the population that can access an invasive BCI is vanishingly small: you need a specific neurological profile, a surgeon willing to operate, a hospital with the imaging and monitoring infrastructure, and either a clinical trial slot or a sum of money no insurance code currently covers. Science Corp., the startup founded by former Neuralink president Max Hodak, is preparing its own first-in-human sensor — a device that sits on the retina rather than in the cortex — which sidesteps some of the surgical risk but introduces its own exclusion criteria.

'We have patients waiting six months for a custom wheelchair cushion. The gap between a Neuralink press release and their daily reality is measured in decades, not years,' says Michaela Thorpe, a rehabilitation engineer at Karolinska Institutet.

On the spatial-computing side, Apple's hand-and-eye-tracking input model — introduced with Vision Pro in early 2024 and now refined through visionOS 26 — has been in users' hands long enough to generate more than anecdotal data. In March, the blind-and-low-vision advocacy community AppleVis released its 2025 Apple Vision Accessibility Report Card, and the results were not gentle. As Marcus Mendes detailed at 9to5Mac, Apple's grades dropped across multiple categories. The new 'Liquid Glass' design language — a frosted translucent aesthetic that looks beautiful in keynote slides — was called out by respondents for reducing contrast, making interface elements harder to distinguish for users with low vision. One survey participant wrote that Liquid Glass 'feels like someone fogged the lens I already struggle to see through.' The report also flagged persistent bugs in VoiceOver, the screen reader that blind users depend on to navigate visionOS — bugs that have gone unfixed through multiple OS updates.

The Vision Pro input model is built on a triangle of interdependent components: outward-facing cameras that track hand position, inward-facing cameras that track gaze direction, and an operating system that fuses the two into a pointing-and-clicking metaphor. You look at something, you pinch, it selects. It works smoothly in a well-lit room with two working eyes and two working hands. It works less smoothly if you have nystagmus, or a prosthetic eye, or one hand, or hands that tremble, or if you are sitting in a room with a window behind you that swamps the IR illuminators. Every layer of the stack is an exclusion point. Gaze tracking alone fails for roughly 6% of the population (people with strabismus, amblyopia, monocular vision, or severe astigmatism), and that is before you get to the hand-tracking part.

What is under-discussed is the second-person experience: what it is like to be near someone using these input methods. A person wearing a Vision Pro and navigating with hand gestures looks, from across the dinner table, like someone performing a mime routine they are not enjoying. A person using voice dictation in an open-plan office is broadcasting half their Slack messages to the row behind them. A person wearing an sEMG wristband that detects finger movements too slight to see looks like they are doing nothing at all, which is the point and also the problem, because their partner has no idea whether they are clicking through a work email or ignoring a direct question. Input methods are social technologies. The demo never shows the person sitting next to the user.

Voice control has become the default accessibility bridge for most new platforms, and it is the least bad option for a significant subset of users with motor impairments. Modern on-device speech recognition — Apple's, Google's, Meta's — is fast enough and accurate enough to replace a keyboard for many tasks, and voice does not require a specific hand posture or gaze vector. But voice breaks down in exactly the situations where computing is most ambient: in a shared bedroom, on public transit, in a meeting, anywhere you do not want to narrate your interaction aloud. It also breaks down for the roughly 1 in 14 people with a speech sound disorder, and for anyone who speaks with an accent the training data underrepresents. A 2025 Stanford HCI study found that commercial speech recognition systems had word error rates two to four times higher for speakers with dysarthria than for controls — and dysarthria is common after stroke, traumatic brain injury, and in Parkinson's disease, precisely the populations who might rely on voice as their primary input.

China's approval in March of the first commercial brain implant for spinal cord injury patients — a device from NeuCyber Neurotech, a Beijing-backed firm that publicly acknowledged it is roughly three years behind Neuralink, as reported by Reuters — opens a regulatory lane that the United States and Europe have not yet built. Whether that accelerates accessibility or merely accelerates the bypassing of safety review is an open question. The device costs the equivalent of roughly $28,000 in the Chinese market, still far beyond what most spinal cord injury patients can pay out of pocket, but within the range that a national health system could conceivably subsidize if outcomes data justify it. No such subsidy framework exists yet.

Samsung's Galaxy XR, launched in late 2025, takes a different approach: it supports hand tracking and eye tracking, but also ships with a physical handheld controller, and its 'PC Connect' feature — which beams a Windows desktop into the headset, as reported by 9to5Google in December — means users can fall back to a keyboard and mouse for work that requires precision. That fallback is itself an accessibility feature, even if Samsung does not market it as one. The option to use a familiar, high-precision input method without penalty is what keeps a platform usable for someone whose hands do not match the tracking model. It is also the option that the 'pure' gesture platforms are ideologically committed to eliminating.

There is a pattern here, and it is not subtle. Each new input paradigm is demoed by people whose bodies work the way the engineers' bodies work. The training data for hand-tracking models is collected disproportionately from young, able-bodied workers in well-lit offices in California and Shenzhen. The sEMG algorithms are tuned on forearms without edema, without surgical scars, without the muscle atrophy that accompanies decades of reduced mobility. The BCI trials enroll participants who are paralyzed but otherwise medically stable — no one with a history of seizures, no one on blood thinners that make cranial surgery riskier. The exclusion criteria that make the trials clean also make the results unrepresentative of the population that could benefit most. This is not malice. It is the physics of convenience.

What would change if accessibility were a design constraint from the first sprint rather than a compliance checklist at launch? Hand-tracking systems that tolerate tremor could use temporal smoothing and adaptive dead zones — techniques well-known from assistive mouse software, rarely implemented at the OS level in spatial computing. sEMG bands could ship with calibration profiles for common motor conditions, the way hearing aids ship with presets for different hearing-loss curves. Gaze-tracking systems could accept head-pose input as a fallback when eye tracking fails, which it always does for someone. None of this is technically exotic. All of it requires product teams to spend time with users whose bodies embarrass the marketing narrative.
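
The first of those ideas, temporal smoothing plus an adaptive dead zone, fits in a few dozen lines. What follows is a minimal Python sketch, not a description of how visionOS or any shipping hand tracker works: the class name `TremorTolerantCursor`, its parameters (`alpha`, `jitter_alpha`, `gain`, the pixel radii), and the choice of an exponential moving average are all assumptions made for illustration. The raw pointer position is smoothed over time, recent jitter is estimated, and the cursor is held still until the smoothed position leaves a dead zone that widens as the jitter grows.

```python
import math
from dataclasses import dataclass, field
from typing import Optional, Tuple

Point = Tuple[float, float]


@dataclass
class TremorTolerantCursor:
    """Illustrative filter: EMA smoothing plus a jitter-scaled dead zone.

    All defaults are hypothetical and would need tuning with real users.
    """
    alpha: float = 0.2         # smoothing factor for the pointer position
    jitter_alpha: float = 0.1  # smoothing factor for the jitter estimate
    gain: float = 2.0          # dead-zone radius = gain * estimated jitter
    min_radius: float = 2.0    # smallest dead zone, in pixels
    max_radius: float = 20.0   # cap so deliberate moves are not swallowed
    _smooth: Optional[Point] = field(default=None, init=False, repr=False)
    _cursor: Optional[Point] = field(default=None, init=False, repr=False)
    _jitter: float = field(default=0.0, init=False, repr=False)

    def update(self, raw: Point) -> Point:
        """Consume one raw pointer sample and return the cursor position."""
        if self._smooth is None or self._cursor is None:
            # First sample: nothing to smooth against yet.
            self._smooth = raw
            self._cursor = raw
            return raw

        # Jitter estimate: how far raw samples stray from the smoothed path.
        deviation = math.dist(raw, self._smooth)
        self._jitter += self.jitter_alpha * (deviation - self._jitter)

        # Temporal smoothing: exponential moving average of the position.
        sx, sy = self._smooth
        self._smooth = (sx + self.alpha * (raw[0] - sx),
                        sy + self.alpha * (raw[1] - sy))

        # Adaptive dead zone: wider when the signal is shakier, within bounds.
        radius = min(self.max_radius,
                     max(self.min_radius, self.gain * self._jitter))

        # Move the cursor only when the smoothed position leaves the dead zone.
        if math.dist(self._smooth, self._cursor) > radius:
            self._cursor = self._smooth
        return self._cursor


# Usage: a pointer shaking five pixels either side of a thumbnail.
cursor = TremorTolerantCursor()
position = cursor.update((100.0, 100.0))
for i in range(50):
    offset = 5.0 if i % 2 == 0 else -5.0
    position = cursor.update((100.0 + offset, 100.0))
```

With these defaults, a pointer that oscillates five pixels either side of a thumbnail leaves the cursor where it started, while a sustained move toward another target still gets through because the dead zone is capped. The numbers are placeholders; finding the right ones is exactly the time-with-users work the paragraph above describes.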

The most telling statistic I have encountered in this beat is not from a paper. It is from the AppleVis report card: the average satisfaction score from blind and low-vision users for visionOS dropped from a B- in 2024 to a C+ in 2025. This is a platform that Apple — the company that has built its brand in part on accessibility leadership — is actively investing in and updating. If the scores are falling, it is because the pace of visual redesign is outstripping the pace of accessibility engineering. The inputs are getting fancier. The exclusion is getting wider. Those two facts are not coincidental.

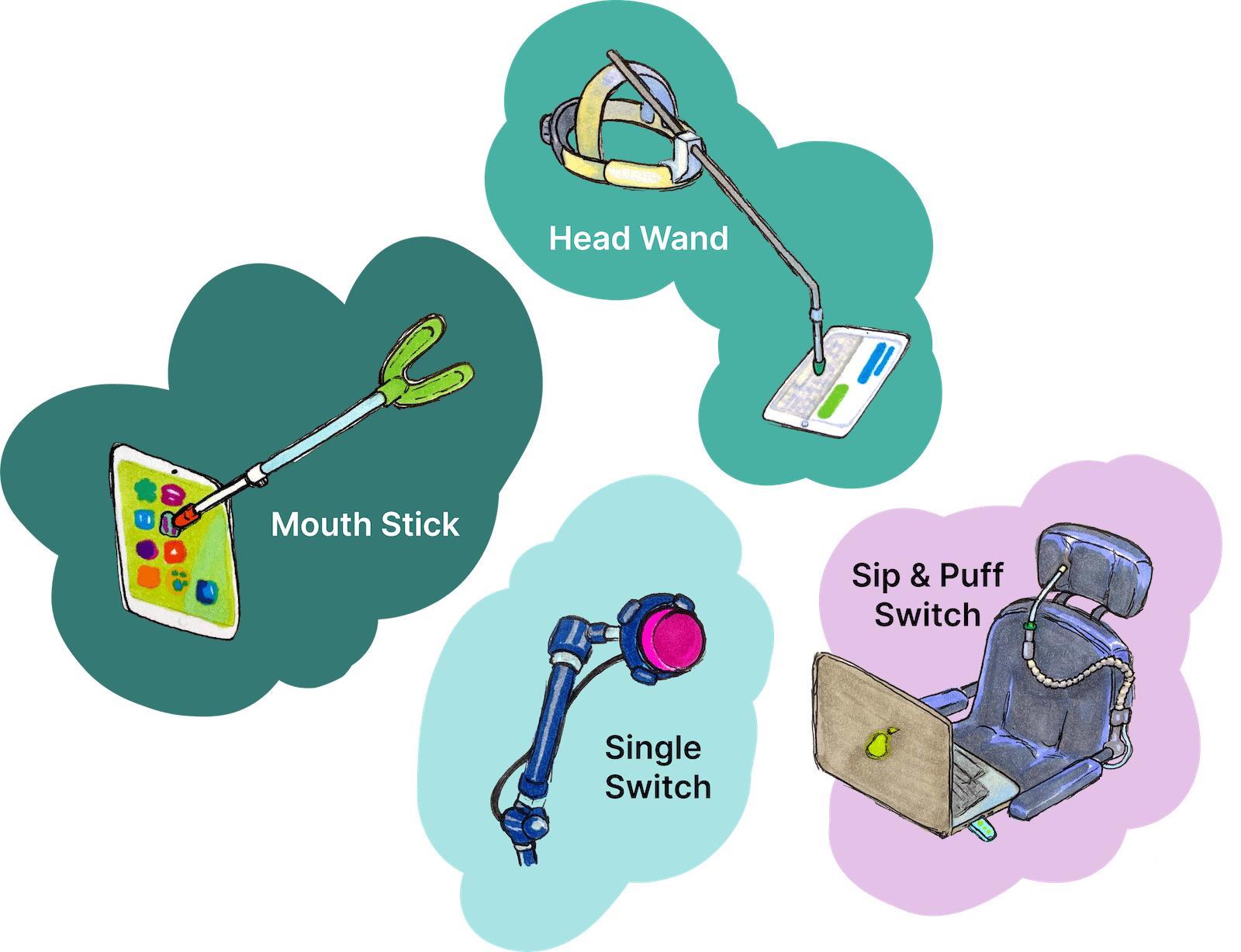

For now, the most accessible new input method remains the one that lets you stop using it and pick up a keyboard or a switch interface or a sip-and-puff controller — the assistive technologies that decades of disability advocacy have made robust, interoperable, and boring. Boring is underrated. A sip-and-puff device works if the lighting is bad, if your voice is tired, if your tremor is bad today, if the neural decoder needs recalibration. It works because it was designed by people who could not afford to assume the user's body was a perfect sensor. The next generation of input methods has not yet learned that lesson. Whether it does will be visible not in press releases, but in the appliance-like reliability assessments that no product reviewer performs: Does it work in a kitchen with an east-facing window? Does it work when your partner is talking? Does it work when your hands are having a bad day? Check back in two years.